AI risk isn’t mostly about sci‑fi scenarios. It’s about the very normal moment a confident model output slips into a high-stakes decision—unchecked.

One number should stop any executive mid-scroll: 47% of business leaders say they’ve made major, high-stakes decisions based entirely on AI-generated content that wasn’t verified (Deloitte, as cited in the research brief). Nearly half. Not “used for brainstorming.” Not “helped with a draft.” Decided.

That’s the dark side that rarely makes the keynote slide. The risk isn’t that AI will become sentient. It’s that it already sounds certain enough to be obeyed.

And that certainty is contagious. The same Deloitte-cited summary reports that 83% of AI users feel confident in the tools’ reliability, even though it also puts the tools’ error rate at more than one in three. Confidence, in other words, is not an accuracy metric.

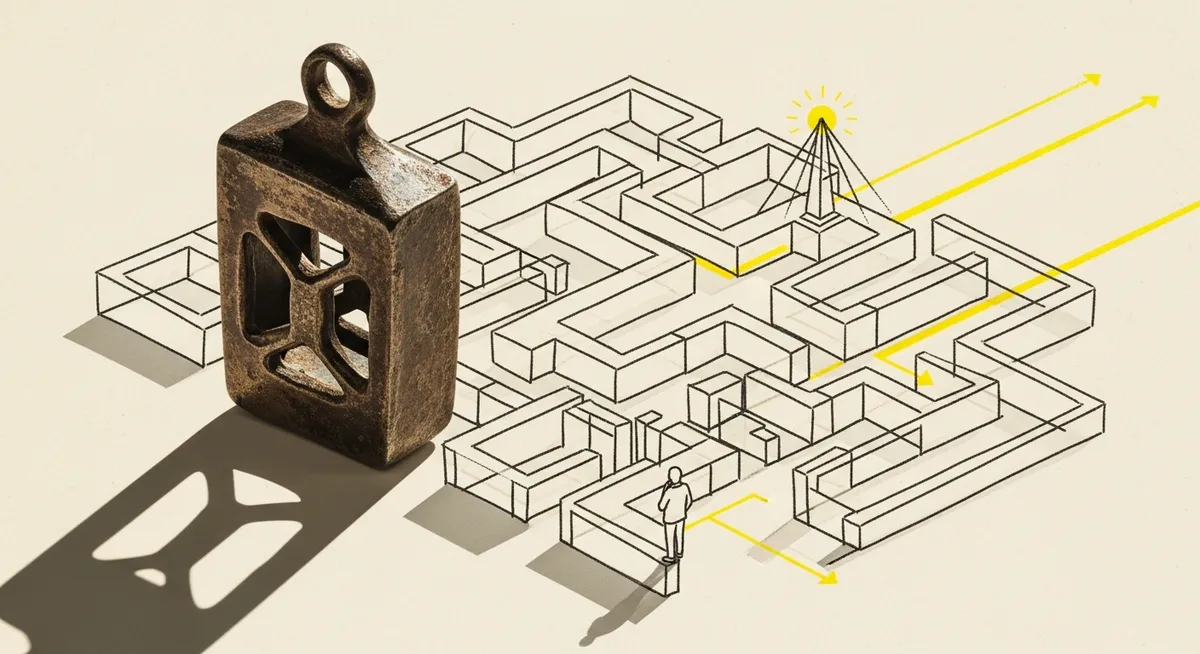

The uncomfortable question is simple: if smart teams are treating unverified output as decision-grade, what happens when AI becomes the default layer between a business and reality?

The hidden failure mode: automation bias looks like “moving fast”

A lot of AI risk talk focuses on model behavior—hallucinations, bias, deepfakes. But the more common failure shows up in people. Research cited in the brief from Microsoft and Carnegie Mellon suggests that higher user confidence in AI tools can reduce critical-thinking effort, increasing the risk of automation bias in decision-making.

That’s a subtle shift, and the subtlety is what makes it dangerous. Automation bias doesn’t announce itself. It looks like efficiency: fewer debates, faster approvals, lighter review. A team still “checks” the work, but the check becomes a skim. The output reads well. The numbers look plausible. The meeting moves on.

Seen from the other side, this is a demand gen problem, not just an IT or legal problem. When marketing teams use LLMs for positioning, SEO pages, competitive summaries, audience research, or sales enablement, the output quickly becomes upstream input for revenue decisions. Messaging choices, segmentation, spend allocation. Once those decisions are anchored on a confident error, the downstream systems do exactly what they’re designed to do: scale it.

Hallucinations aren’t a punchline when they hit the P&L

Hallucinations still get treated like a quirky demo moment—until they turn into real losses. Deloitte’s cited summary in the research brief attributes $67.4B in global business losses in 2024 to AI hallucinations. Even if the estimate is directional, the implication is concrete: hallucinations aren’t just “bad answers.” They’re a cost category.

But the more revealing detail is operational. The same Deloitte-cited summary claims 82% of AI bugs in production software stem directly from hallucinations. That’s a governance story wearing a technical mask. Bugs aren’t just model failures; they’re process failures: unclear decision rights, missing verification gates, and incentives that reward shipping over checking.

Here’s where the “dark side” gets quiet. According to 2023 findings summarized in the research brief, inaccuracy in generative AI was cited as a primary concern, more often than cybersecurity or IP infringement, yet only 32% of organizations reported mitigating it. Awareness is high. Follow-through isn’t.

And governance basics are thin: the same 2023 summaries report only 21% of companies had policies for generative AI use. That gap—between concern and controls—is where risk compounds.

Black boxes create a new kind of accountability vacuum

Even when an organization wants to “be responsible,” AI often makes responsibility hard to assign. The research brief notes that many systems remain difficult to explain (so-called black boxes), which complicates accountability and the ability to challenge decisions in domains like hiring, lending, and compliance (as summarized from expert commentary, including Baylor University and Harvard sources).

That matters because businesses don’t just need answers; they need reasons. A pipeline forecast that can’t be explained becomes a political object. A lead-scoring change that can’t be justified becomes a fight between marketing, sales, and RevOps. A compliance decision that can’t be traced becomes a liability. When the rationale disappears, the organization starts arguing from authority: who approved it, whose tool it was, whose budget paid for it.

To understand why this is showing up now, it helps to go back to how quickly AI moved from “tool” to “colleague.” Teams didn’t redesign their controls first. They bolted models onto workflows that were already under pressure to produce more with less. The result is a new kind of operational risk: decisions made faster than the organization can explain them.

The risks marketers inherit: bias, privacy, and deepfake-grade trust erosion

Some risks are well-known in theory and messy in practice.

Bias is one. The research brief notes that bias in training data can produce discriminatory outcomes in processes like hiring, undermining trust and increasing legal and reputational exposure. It references the widely cited example of Amazon’s experimental hiring tool, often discussed as a cautionary case in how historical patterns can reappear as “objective” recommendations.

Privacy and consent is another. AI’s need for large-scale data raises risks when systems are trained on, or operate over, sensitive information, especially in areas like healthcare diagnostics or surveillance-like personalization (as summarized in the research brief). For demand gen leaders, the issue is more practical than philosophical: what data is being sent to third-party systems, what is retained, and what gets re-used in ways a customer never agreed to?

Then there’s misuse. The brief highlights deepfakes and misinformation as immediate harms enabled by generative AI, creating fraud and brand risks. This isn’t abstract. Deepfake-grade content collapses the old shortcuts for trust—“I recognize the voice,” “the screenshot looks real,” “the email reads like them.” When authenticity gets cheap, verification becomes expensive.

One more data point puts the breadth in perspective: MIT’s AI Risk Repository catalogs 1,700+ risks across domains like privacy, security, and human-AI interaction (as cited in the research brief). That number isn’t meant to overwhelm. It’s meant to clarify the real situation: “AI risk” is not one risk. It’s a risk portfolio.

The practical response in 2026: treat AI like a system that needs controls

The good news is that this isn’t a lawless frontier. NIST released the AI Risk Management Framework (AI RMF 1.0) on January 26, 2023, and launched its Trustworthy and Responsible AI Resource Center on March 30, 2023, to support implementation (as summarized in the research brief). The framework’s value isn’t in buzzwords; it’s in forcing organizations to answer basic questions: Who is accountable? What gets measured? What happens when the system fails?

In practice, the better approach is boring—and that’s the point. Verification workflows for high-stakes outputs. Audit trails for what the model produced and what a human changed. Clear decision rights for when AI can recommend versus when it can decide. Monitoring for drift and recurring failure modes. Red-teaming for misuse paths. And policies that are short enough that people actually follow them.
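To make the first three items concrete, here is a minimal sketch of a verification gate with an audit trail. It is an illustration, not a reference implementation: every name in it (`Stakes`, `AIOutput`, `capture`, `review`, `can_drive_decision`, the model label `hypothetical-llm`) is hypothetical, and it assumes a deliberately simple rule in which high-stakes output cannot feed a decision until a named human has signed off.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import List, Optional


class Stakes(Enum):
    LOW = "low"    # brainstorming, first drafts
    HIGH = "high"  # anything that feeds a budget, legal, or hiring decision


@dataclass
class AuditEvent:
    actor: str    # model label or human reviewer
    action: str   # "generated", "edited", or "verified"
    content: str  # the text as it stood after this event
    at: str       # UTC timestamp, ISO 8601


@dataclass
class AIOutput:
    content: str
    stakes: Stakes
    verified_by: Optional[str] = None
    audit_log: List[AuditEvent] = field(default_factory=list)

    def record(self, actor: str, action: str) -> None:
        """Append a snapshot of the current content to the audit trail."""
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append(AuditEvent(actor, action, self.content, stamp))


def capture(content: str, stakes: Stakes, model: str) -> AIOutput:
    """Log what the model produced before anyone touches it."""
    output = AIOutput(content=content, stakes=stakes)
    output.record(model, "generated")
    return output


def review(output: AIOutput, reviewer: str, corrected: Optional[str] = None) -> None:
    """Record a human sign-off, plus any correction the reviewer made."""
    if corrected is not None and corrected != output.content:
        output.content = corrected
        output.record(reviewer, "edited")
    output.verified_by = reviewer
    output.record(reviewer, "verified")


def can_drive_decision(output: AIOutput) -> bool:
    """Decision rights (assumed rule): high-stakes output needs a named verifier."""
    if output.stakes is Stakes.HIGH:
        return output.verified_by is not None
    return True


# Usage: an unverified competitive claim is blocked until a human reviews it.
claim = capture("Competitor X raised prices 12%", Stakes.HIGH, model="hypothetical-llm")
print(can_drive_decision(claim))  # False
review(claim, reviewer="analyst@example.com", corrected="Competitor X raised list prices 10%")
print(can_drive_decision(claim))  # True
print([event.action for event in claim.audit_log])  # ['generated', 'edited', 'verified']
```

The design choice worth noticing is that the gate is a property of the output, not of the person using it. A skim cannot quietly pass as a check, because the sign-off has a name and a timestamp attached.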

That doesn’t make AI “safe.” It makes the organization less naive about what it’s doing.

The dark side of AI no one talks about isn’t that models sometimes lie. It’s that organizations are building habits around those lies: quietly, efficiently, and at scale. The point of the opening statistic wasn’t fear. It was governance. If 47% of leaders have already made unverified, high-stakes calls from AI output, the next competitive advantage won’t be who adopts AI fastest. It’ll be who can still prove what’s true when the model sounds most certain.