If intent data is supposed to make pipeline more efficient, why do so many teams end up with more “surging” accounts and less qualified pipeline?

The constraint is brutal: even multi-signal scoring models still carry a false-positive benchmark of <50% (Intent Data Account Prioritization Statistics for 2026). That’s not a rounding error. That’s a warning label.

Meanwhile the upside is real. In 2026 benchmarks, intent-sourced meetings are cited at roughly $150–$400 versus $500–$1,200 for cold outbound meetings, with 20–40% of pipeline reported to originate from intent data programs (Intent Data Account Prioritization Statistics for 2026). So the question isn’t “should we use intent?” It’s: what’s the one move that turns intent from alert noise into a prioritization system?

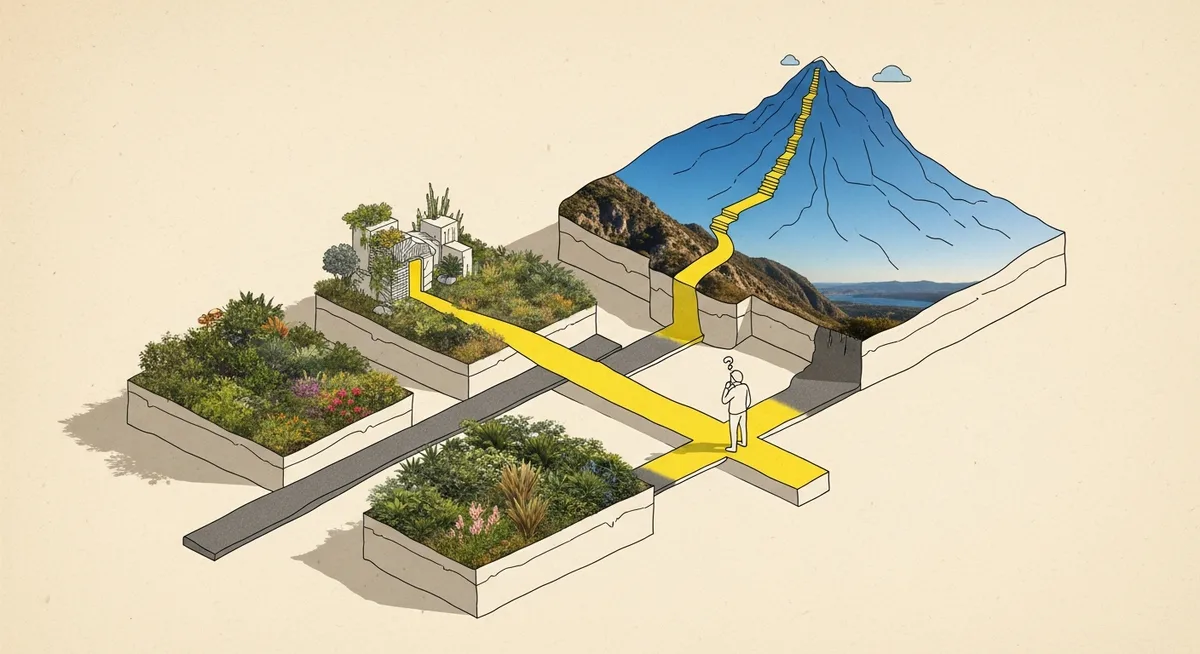

If you only change one thing, change this: stop ranking accounts by intent score alone. Build a layered model: ICP fit as the baseline, then intent as the overlay (Intent Data Account Prioritization Statistics for 2026). Everything else—SLAs, tiers, sequences—gets easier once that’s true.

Why this matters right now: speed and cost are compressing at the same time

In 2026, intent is no longer a “nice-to-have enrichment.” The market for B2B buyer intent data is cited at $4.5B with a 15.9% CAGR (Intent Data Account Prioritization Statistics for 2026). That scale exists because leadership teams want the same thing: more qualified pipeline with less wasted effort.

But there’s a second force. Activation is shifting from days to minutes as workflows get more real-time and AI-assisted (Latest news on intent data trends in sales and marketing 2026). Benchmarks even cite <4 hours as time-to-first-touch after detecting hot signals (Intent Data Account Prioritization Statistics for 2026). That’s not “respond faster” advice. It’s an operating requirement.

Here’s the dissonance: teams feel pressure to move fast, yet the data itself can be wrong often enough to burn rep trust. And once SDRs stop believing alerts, adoption falls off a cliff. Mature teams reportedly see >80% daily SDR adoption (Intent Data Account Prioritization Statistics for 2026). Most teams don’t get that by adding more signals. They get it by adding guardrails.

The most common failure mode: a single score that nobody can explain

One-number scoring is seductive because it looks like clarity. It’s usually just compression. Fit, intent, engagement, stage—mashed into a single integer that can’t answer the only question an SDR cares about: “Why this account, today?”

This is where teams quietly lose incrementality. They take a list of “high intent” accounts, run outreach, and celebrate meetings—without proving those meetings weren’t already going to happen. Directional attribution from dashboards doesn’t fix that. It can’t.

The practical fix is not philosophical. It’s mechanical: make the model legible. Best-practice guidance calls out scoring by signal strength, relevance, recency, frequency, and account fit, plus defining explicit thresholds (Expert opinions on best practices for using intent data in B2B marketing). When those ingredients are missing, the system becomes a random-number generator with better UI.

And the fastest way to get it wrong? Acting on a single weak signal. If the false-positive benchmark is <50% even for multi-signal models, single-signal approaches can plausibly be worse (Intent Data Account Prioritization Statistics for 2026). That’s the part many teams learn the hard way.

The one tactic: a layered, tiered model with explicit thresholds (fit → intent → action)

This is the operator version of “intent is not a shortcut—fit first, then intent.” It’s one tactic with three steps. No magic. Just a system your RevOps team can defend and your SDR team can use.

Step 1: Lock the baseline with ICP fit (before you look at intent)

Start by scoring fit. Not because fit is perfect, but because it’s stable. Intent spikes are volatile; your ICP isn’t supposed to be.

Layered scoring—ICP fit as baseline, intent as overlay—is explicitly called out as a common 2026 approach (Intent Data Account Prioritization Statistics for 2026). Treat that as a guardrail: if an account is low-fit, it doesn’t matter how loud the intent looks. It goes to nurture, not to an SDR’s call queue.

When this is wrong: if the company is intentionally expanding into a new segment this quarter, “fit” may be yesterday’s map. In that case, update the ICP first. Don’t let intent become the excuse to avoid the decision.

Step 2: Define “hot” with an explicit threshold (and write it down)

Best-practice guidance recommends explicit thresholds—an example given is “3 high-intent actions in 7 days”—and scoring by strength, relevance, recency, frequency, and fit (Expert opinions on best practices for using intent data in B2B marketing). The exact numbers don’t matter as much as the fact that they exist.
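A written threshold is easy to turn into a check. The sketch below uses the “3 high-intent actions in 7 days” example from the guidance. The function name, the constants, and the sample timestamps are illustrative assumptions, and what counts as a “high-intent action” is left to your own definition.

```python
from datetime import datetime, timedelta

# Threshold values from the example in the text; tune them to your own funnel.
MIN_HIGH_INTENT_ACTIONS = 3
WINDOW_DAYS = 7

def intent_threshold_met(action_times, now,
                         min_actions=MIN_HIGH_INTENT_ACTIONS,
                         window_days=WINDOW_DAYS):
    """Return True if enough high-intent actions fall inside the trailing window."""
    window_start = now - timedelta(days=window_days)
    recent = [t for t in action_times if window_start <= t <= now]
    return len(recent) >= min_actions

# Hypothetical account activity (timestamps of high-intent actions).
now = datetime(2026, 3, 15, 12, 0)
actions = [
    datetime(2026, 3, 9, 9, 0),
    datetime(2026, 3, 12, 14, 30),
    datetime(2026, 3, 14, 8, 15),
    datetime(2026, 3, 1, 10, 0),   # older than 7 days, so it is ignored
]
print(intent_threshold_met(actions, now))  # True: three actions inside the window
```

Because the rule is one function with two parameters, a threshold tweak is a one-line change that everyone can see.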

Why? Because thresholds stop alert fatigue. They also make the inevitable trade-off visible: you will reduce volume before you improve quality. Good. That’s the point.

To understand why, it helps to zoom out to the economics. If signal-to-meeting conversion is only 5–15% (Intent Data Account Prioritization Statistics for 2026), then “more signals” is not automatically “more meetings.” Without thresholds, all you’ve done is increase the denominator.
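The denominator point is easier to see with numbers. This sketch uses the 5–15% signal-to-meeting range cited above. The 2% rate for weak signals and the volumes of 100 are hypothetical, chosen only to show the dilution effect.

```python
def signals_per_meeting(conversion_rate):
    """How many signals it takes, on average, to produce one meeting."""
    return 1 / conversion_rate

def blended_rate(volumes_and_rates):
    """Overall conversion when signal groups with different rates are pooled."""
    total_signals = sum(volume for volume, _ in volumes_and_rates)
    total_meetings = sum(volume * rate for volume, rate in volumes_and_rates)
    return total_meetings / total_signals

# Range cited in the text: 5% to 15% signal-to-meeting conversion.
for rate in (0.05, 0.15):
    print(f"{rate:.0%} conversion: about {signals_per_meeting(rate):.1f} signals per meeting")

# Hypothetical: 100 thresholded signals at 10%, then 100 weak signals at 2% added on top.
before = blended_rate([(100, 0.10)])
after = blended_rate([(100, 0.10), (100, 0.02)])
print(f"blended conversion before: {before:.0%}, after adding weak signals: {after:.0%}")
```

In this made-up case, doubling the signal volume adds two meetings and cuts the blended conversion rate from 10% to 6%. Reps feel that as more work per meeting.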

Step 3: Operationalize with Tier 1/2/3 actions (so Sales and Marketing stop arguing)

Tiering is the bridge between a scoring model and actual work. A common model is: Tier 1 (high-fit/high-intent) for sales outreach; Tier 2 for nurture; Tier 3 for awareness (Expert opinions on best practices for using intent data in B2B marketing).

Tiering matters because it prevents the two classic mistakes at once: marketing sending everything to Sales, and Sales ignoring everything marketing sends. Intent becomes shared truth only when it changes the handoff.
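Here is a minimal sketch of the fit → intent → action mapping. Only “high-fit/high-intent goes to Tier 1” and “low-fit goes to nurture, not the call queue” come from the guidance above. How the in-between combinations are handled, the A/B/C labels as inputs, and the function name are assumptions to adapt.

```python
def action_tier(fit_tier, intent_threshold_met):
    """Map Fit Tier (A/B/C) and Intent Threshold Met (True/False) to an Action Tier.

    Returns (tier, reason) so a rep can see why the account landed there.
    The handling of middle cases is an illustrative assumption.
    """
    if fit_tier not in ("A", "B", "C"):
        raise ValueError(f"unknown fit tier: {fit_tier!r}")
    if fit_tier == "A" and intent_threshold_met:
        return 1, "high fit and intent threshold met: route to SDR"
    if intent_threshold_met or fit_tier in ("A", "B"):
        return 2, "fit or intent present, but not both at Tier 1 level: nurture"
    return 3, "low fit and no qualifying intent: awareness"

for fit, intent in [("A", True), ("C", True), ("B", False), ("C", False)]:
    tier, reason = action_tier(fit, intent)
    print(f"fit={fit} intent={intent} -> Tier {tier} ({reason})")
```

Returning a reason alongside the tier is a deliberate choice: it gives the SDR an answer to “Why this account, today?” without opening a dashboard.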

Personalization also gets simpler. Guidance recommends tailoring outreach to the signal type—for example, competitor comparisons when competitive research spikes, and case studies when onboarding-style research shows up—and activating across channels (Expert opinions on best practices for using intent data in B2B marketing). Not fancy. Specific.

Run it this week: the minimum viable intent prioritization experiment

Here’s the short version of a test you can launch this week:

- Audience: 200–500 accounts from your current TAM list (or open-opportunity accounts plus target accounts) with an ICP fit score already available in your CRM or warehouse.

- Tools: Any intent provider and your CRM/marketing automation. The workflow matters more than the vendor.

- Owners: RevOps (scoring + routing), Demand Gen (nurture + paid), SDR Manager (SLA + adoption).

- Timeline: 14 days for signal collection + routing, 14–30 days for early pipeline indicators.

Setup: Create three fields (or properties): Fit Tier (A/B/C), Intent Threshold Met (Y/N), and Action Tier (1/2/3). Document the threshold in one sentence.

Launch: Route Tier 1 to SDR within an SLA aligned to the <4 hours benchmark for hot signals (Intent Data Account Prioritization Statistics for 2026). Tier 2 goes to a mapped nurture. Tier 3 stays in awareness.
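An SLA only helps if someone can see when it slips. The sketch below classifies a Tier 1 account against the <4 hours time-to-first-touch benchmark. The status labels, function name, and timestamps are hypothetical. In practice the inputs would come from your CRM's signal and activity records.

```python
from datetime import datetime, timedelta

# Benchmark from the text: first touch in under 4 hours after a hot signal.
SLA = timedelta(hours=4)

def sla_status(signal_at, first_touch_at, now):
    """Classify a Tier 1 account as 'met', 'breached', or 'pending'."""
    if first_touch_at is not None:
        return "met" if first_touch_at - signal_at < SLA else "breached"
    # No touch yet: still pending until the SLA window runs out.
    return "pending" if now - signal_at < SLA else "breached"

signal = datetime(2026, 3, 15, 9, 0)
print(sla_status(signal, datetime(2026, 3, 15, 11, 30), datetime(2026, 3, 15, 12, 0)))  # met
print(sla_status(signal, None, datetime(2026, 3, 15, 10, 0)))                           # pending
print(sla_status(signal, None, datetime(2026, 3, 15, 13, 30)))                          # breached
```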

The hypothesis (make it falsifiable): If we require ICP fit as a baseline and only promote accounts that meet a written intent threshold into Tier 1, then SDR-to-meeting conversion will improve (and cost per meeting will drop) because we’ll reduce false positives and focus outreach on in-market, high-fit accounts.

Readout: Don’t grade this on clicks. Grade it on movement and efficiency.

- Success = improved Tier 1 signal-to-meeting conversion versus your prior baseline (directional, but comparable) and early signs of cycle compression.

- Guardrails = Tier 1 volume doesn’t collapse below what your SDR team can work; Tier 2 engagement doesn’t crater.

- Stop-loss = if Tier 1 conversion doesn’t beat baseline after two threshold tweaks, pause automation and audit closed-won paths before iterating.

What to measure (and what not to over-interpret): use conversion lift, cycle shortening, and revenue influence as the north star (Expert opinions on best practices for using intent data in B2B marketing). Treat platform-reported attribution as directional, not definitive. If you can, add a holdout: keep a slice of high-fit accounts out of intent-based routing for two weeks and compare downstream outcomes. That’s how you get closer to incrementality.
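One way to set up the holdout is to assign accounts by hashing their IDs, so the split is stable across reruns and nobody hand-picks it. This is a sketch under assumptions: the 20% share, the salt string, the account ID format, and the sample rates are all hypothetical.

```python
import hashlib

HOLDOUT_SHARE = 0.2  # hypothetical: keep 20% of high-fit accounts out of intent routing

def in_holdout(account_id, salt="intent-holdout-v1", share=HOLDOUT_SHARE):
    """Deterministically place an account in the holdout based on a hash of its ID."""
    digest = hashlib.sha256(f"{salt}:{account_id}".encode()).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # uniform value in [0, 1)
    return bucket < share

def meeting_rate(meetings, accounts):
    """Meetings per account worked; 0.0 when the group is empty."""
    return meetings / accounts if accounts else 0.0

def relative_lift(treated_rate, holdout_rate):
    """Relative improvement of the routed group over the holdout, or None if undefined."""
    if holdout_rate == 0:
        return None
    return (treated_rate - holdout_rate) / holdout_rate

# Hypothetical readout: 12% meeting rate with intent routing, 8% in the holdout.
lift = relative_lift(meeting_rate(12, 100), meeting_rate(8, 100))
print(f"relative lift: {lift:.0%}")
```

With a few hundred accounts and a two-week window, the sample is small. Treat the lift as a direction to investigate, not a proven effect.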

The “don’t do this” list that saves rep trust

Three anti-patterns show up everywhere because they feel efficient. They aren’t.

- Don’t: route accounts to Sales on a single weak signal. Do: require multi-factor thresholds (Expert opinions on best practices for using intent data in B2B marketing).

- Don’t: copy generic benchmarks. Do: audit high-converting deals and reverse-engineer the paths in your own funnel (Expert opinions on best practices for using intent data in B2B marketing).

- Don’t: treat third-party intent as the full story. Do: combine first- and third-party signals where possible to improve accuracy and reduce noise (Intent Data Account Prioritization Statistics for 2026; Latest news on intent data trends in sales and marketing 2026).

One more thing—because it’s 2026 and privacy isn’t optional. Trends emphasize more consent-based and anonymized approaches, plus heavier reliance on first-party signals (Latest news on intent data trends in sales and marketing 2026). The trade-off is less granularity. The benefit is fewer governance surprises and more durable programs.

Intent data is everywhere now. The market made sure of that. The separator is whether a team can turn noisy signals into a legible decision system: fit first, explicit thresholds, tiered actions, and measurement that doesn’t confuse activity for lift. That’s how “47 surging accounts” turns back into something useful—a short list that a rep can work with confidence.