AI adoption in the US has a weird public narrative: either everyone is using it, or hardly anyone is. Both claims can be “true,” depending on which metric gets picked.

The Census Bureau’s business survey put firm-level AI adoption at 18% of US firms by late 2025. That sounds like a niche. But the Survey of Business Uncertainty paints a different picture: 78% of the US labor force works at AI-adopting firms, and 54% works at firms using large language models. Same country, same moment, two very different realities.
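
Part of the gap is likely a denominator effect: one figure counts firms, the other counts workers. The sketch below uses made-up size bands (the firm counts, headcounts, and adoption rates are hypothetical, not drawn from either survey) to show how a low firm share and a high worker share can coexist when adoption concentrates in large employers.

```python
# Hypothetical size bands: (number of firms, employees per firm, share of firms using AI).
# Illustrative values only; none of them come from the surveys cited above.
SIZE_BANDS = [
    (9_000, 5, 0.10),     # small firms
    (900, 100, 0.50),     # mid-sized firms
    (100, 5_000, 0.90),   # large firms
]


def firm_weighted_adoption(bands):
    """Share of firms that use AI (every firm counts once)."""
    total_firms = sum(n for n, _, _ in bands)
    adopting_firms = sum(n * rate for n, _, rate in bands)
    return adopting_firms / total_firms


def worker_weighted_adoption(bands):
    """Share of workers employed at AI-using firms (every worker counts once)."""
    total_workers = sum(n * size for n, size, _ in bands)
    workers_at_adopters = sum(n * size * rate for n, size, rate in bands)
    return workers_at_adopters / total_workers


print(f"firms using AI: {firm_weighted_adoption(SIZE_BANDS):.1%}")
print(f"workers at AI-using firms: {worker_weighted_adoption(SIZE_BANDS):.1%}")
```

With these invented inputs, roughly 14% of firms use AI while about 79% of workers sit inside an AI-using firm. Differences in survey design and question wording can widen or narrow the real-world gap.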

Here’s the pattern interrupt: the AI story in 2026 isn’t mainly about models getting smarter. It’s about management catching up.

Adoption isn’t the bottleneck anymore. Operationalization is. A 2026 adoption trend report (as summarized in the research brief) says 63% of businesses reported fully operationalizing AI in 2026, up from 45% in 2025. And 86% of organizations reported productivity gains from AI (2026 productivity impact report, as summarized). Those are big numbers. They also hide a quieter problem: only 20% report mature oversight and governance for autonomous agents (2026 agentic AI governance finding, as summarized).

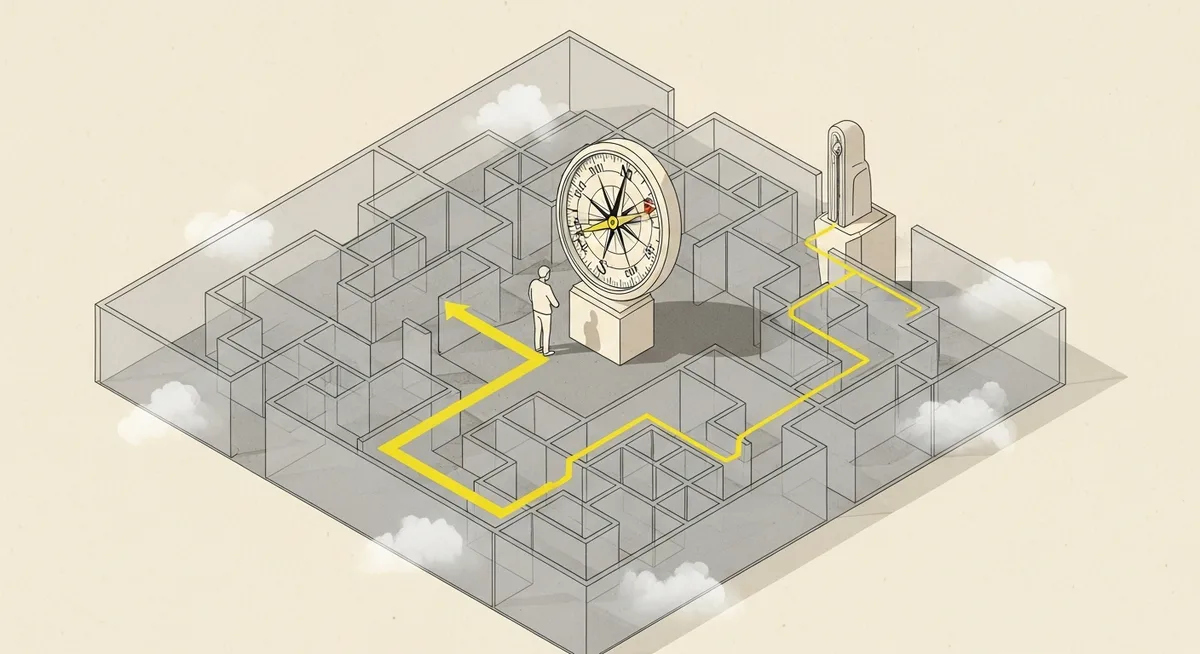

That gap—between rolling out autonomy and being able to control it—is where “management as AI superpower” stops being a slogan and becomes a job description.

The shift: from using AI tools to delegating work

Ethan Mollick’s January 27, 2026 write-up about an experimental University of Pennsylvania class offers a concrete view of what changes when AI is treated as labor, not software. Executive MBA students—many without coding experience—were asked to create a startup prototype in four days using tools like Claude Code, ChatGPT, Claude, Gemini, and Google Antigravity.

The result wasn’t just faster output. Students built working prototypes and moved through real startup motions: idea generation, market research, and iteration. Mollick notes the work was “significantly more” than what previous cohorts achieved in much longer timeframes. The important detail is why: the students didn’t suddenly become engineers. They became managers of capable (if inconsistent) digital labor.

In Mollick’s framing, delegation to AI can be thought of as a trade between three variables: the human baseline time for a task, the probability the AI succeeds, and the AI “process time” (prompting, waiting, checking, correcting). That last factor is where many teams get surprised. AI can generate an answer in minutes, but the evaluation can eat the hour.
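
One way to make the three-variable trade-off concrete is sketched below. It is not Mollick’s own formula. It assumes that a failed AI attempt means the human redoes the whole task by hand, so the expected delegated time is the process time plus the failure probability times the baseline. Under that assumption, delegation pays off when process time is less than the success probability times the baseline. The function names and the example numbers are hypothetical.

```python
def expected_delegation_minutes(baseline_min, p_success, process_min):
    """Expected time when delegating, assuming a failed attempt falls back to
    doing the whole task by hand (an assumption of this sketch)."""
    if not 0.0 <= p_success <= 1.0:
        raise ValueError("p_success must be between 0 and 1")
    return process_min + (1.0 - p_success) * baseline_min


def worth_delegating(baseline_min, p_success, process_min):
    """True when the expected delegated time beats doing the task by hand."""
    return expected_delegation_minutes(baseline_min, p_success, process_min) < baseline_min


# Hypothetical task: 120 minutes by hand, 70% chance the AI output is usable.
quick_check = worth_delegating(120, 0.7, process_min=30)  # 30 + 36 = 66 minutes expected
slow_check = worth_delegating(120, 0.7, process_min=90)   # 90 + 36 = 126 minutes expected
```

The same task flips from a clear win to a loss once prompting, waiting, and checking climb from 30 to 90 minutes, which is the sense in which evaluation can eat the hour.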

And that’s the first management lesson of 2026: speed isn’t the hard part. Control is.

Governance is the missing layer (and it’s not optional)

Agentic AI raises the stakes because it moves from “drafting” to “doing.” Draft an email? Low risk. Change a price in a billing system, update CRM fields, trigger a sequence, or approve refunds? Now the system is taking actions that can create compliance exposure, security incidents, and revenue leakage.

Expert guidance on agentic AI management in the research brief converges on a governance-first posture: define risk thresholds, monitoring, escalation paths, and audit trails so agents can operate autonomously only inside explicit boundaries. Put differently, autonomy is earned. It isn’t granted.

One useful mental model from the same guidance: treat AI agents as privileged users or “digital workers.” That means identity and access management, immutable logs, and clear accountability—similar to how a serious organization handles human access to ERP, CRM, and finance tooling. Not because it’s fashionable. Because it’s basic operational hygiene when an entity can take action across core systems.
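
As a rough illustration of what “immutable logs” can mean in practice, the sketch below chains each log entry to the previous one with a SHA-256 hash, so a later edit to an earlier entry is detectable. The `AuditLog` class and its field names are hypothetical. A hash chain is tamper-evident rather than truly immutable, and a production setup would typically pair it with the organization’s identity platform and write-once storage.

```python
import hashlib
import json


class AuditLog:
    """Append-only log where each entry stores the hash of the previous one,
    so changing or removing an earlier entry breaks the chain."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(record):
        # Hash every field except the stored hash itself, in a stable key order.
        payload = {k: v for k, v in record.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode("utf-8")).hexdigest()

    def append(self, agent_id, action, target):
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        record = {"agent_id": agent_id, "action": action, "target": target, "prev_hash": prev_hash}
        record["hash"] = self._digest(record)
        self._entries.append(record)
        return record

    def verify(self):
        """Recompute the chain and report whether every entry is intact."""
        prev_hash = self.GENESIS
        for record in self._entries:
            if record["prev_hash"] != prev_hash or record["hash"] != self._digest(record):
                return False
            prev_hash = record["hash"]
        return True
```

Each entry names the acting agent, which is the accountability half of the “digital worker” model: every action traces back to one identity.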

The uncomfortable point is that governance maturity is lagging while adoption accelerates. The research brief’s 20% “mature oversight” figure (as summarized) is a warning label, not a trivia fact. If the organization can’t answer who approves what, how exceptions are handled, and where audit logs live, then “agentic” becomes a synonym for “unguarded.”

The picture is more nuanced than that, though. Governance doesn’t mean freezing everything behind committees. It means building a tiered system where low-stakes work flows freely and high-stakes work has brakes.
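
A tiered system can be as simple as a lookup from action type to risk tier plus one or two thresholds. The sketch below is a minimal version under stated assumptions: the action names echo the examples earlier in this section, while the tier assignments, the `refund_auto_limit` value, and the `decide` function are all hypothetical.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    BLOCK = "block"


# Hypothetical tier assignments; a real policy would be set by the risk owners.
ACTION_TIERS = {
    "draft_email": "low",
    "update_crm_field": "low",
    "approve_refund": "medium",
    "change_price": "high",
}


def decide(action, amount=0.0, refund_auto_limit=50.0):
    """Map one proposed agent action to a decision."""
    tier = ACTION_TIERS.get(action)
    if tier is None:
        # Anything not explicitly listed is outside the agent's boundary.
        return Decision.BLOCK
    if tier == "low":
        return Decision.ALLOW
    if tier == "medium":
        # Small amounts flow freely; larger ones wait for a human.
        return Decision.ALLOW if amount <= refund_auto_limit else Decision.REQUIRE_APPROVAL
    return Decision.REQUIRE_APPROVAL
```

Defaulting unlisted actions to `BLOCK` is the design choice that keeps autonomy inside explicit boundaries: the agent gains a capability only when someone adds it to the table.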

The management failure mode: layering agents onto broken workflows

Plenty of AI programs stall for a reason that has nothing to do with model quality. Experts cited in the research brief (including Deloitte’s framing as summarized) caution that implementations often fail when organizations layer agents onto existing human workflows instead of redesigning processes end to end.

This is where many demand gen teams will recognize the smell. Adding AI to a messy lead lifecycle doesn’t fix attribution. Dropping an agent into a chaotic campaign intake process doesn’t create strategy. It just produces more output—faster—inside the same bottlenecks.

The value, per the research brief’s expert consensus, comes more from orchestration than deployment: workflow ownership, exception handling, and multi-agent coordination. In practice, that means naming an owner for the entire arc of work (from trigger to outcome), defining what “done” means, and deciding what happens when the agent is uncertain. Ambiguity is where autonomy goes to die.
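
Those three decisions (an owner, a definition of done, a path for uncertainty) fit in a few lines of configuration. The sketch below is one hypothetical way to write them down. The `Workflow` class, the `confidence` field on the agent result, and the 0.8 threshold are assumptions for illustration, not something the research brief specifies.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Workflow:
    name: str
    owner: str                        # one accountable person, from trigger to outcome
    is_done: Callable[[dict], bool]   # explicit definition of "done"
    min_confidence: float = 0.8       # hypothetical threshold for acting without review

    def route(self, result: dict) -> str:
        """Decide what happens to a single agent result."""
        if result.get("confidence", 0.0) < self.min_confidence:
            return f"escalate to {self.owner}"
        if not self.is_done(result):
            return "retry"
        return "complete"


# Hypothetical example: a lead counts as routed only once a rep is assigned.
lead_routing = Workflow(
    name="lead routing",
    owner="revops-lead",
    is_done=lambda result: bool(result.get("assigned_rep")),
)
```

A result with no confidence score is treated as uncertain and goes to the owner, so the default behavior under ambiguity is escalation rather than silent action.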

There’s a second-order effect here that executives often miss: when AI makes production cheap, the scarce resource becomes judgment. Taste. Prioritization. The ability to say no. This is also why “management training” shows up in Mollick’s story as the quiet advantage; the students’ edge wasn’t prompt tricks, it was scoping, feedback, and evaluation.

Org design in 2026: titles are easy, accountability is hard

Organizations are experimenting with leadership structures, including the rise of Chief AI Officer roles. The research brief cites 38% of organizations appointing CAIOs in 2026 (as summarized). The catch: reporting lines vary, and that inconsistency can block value delivery when ownership fragments.

That fragmentation matters because AI operationalization crosses functions by default. Security, legal, RevOps, finance, and line-of-business leaders all have legitimate veto power. Without a unified operating model, teams get stuck in a loop: pilots that look impressive, then months of waiting once the work touches production systems.

So the managerial superpower is not “AI enthusiasm.” It’s the ability to build a system where autonomy has boundaries, exceptions have paths, and outcomes have owners. Boring. Essential.

And it’s happening now. Investment intent is high: 80% of firms planned AI investments during 2026, with 30% of large firms planning to invest over $1M (2026 AI investment plans survey, as cited in the research brief). Money will keep flowing. The differentiator will be whether management can turn spending into repeatable operations.

Return to the adoption paradox at the top: 18% of firms using AI can sound like a slow rollout. But if 78% of the labor force sits inside AI-adopting companies, the competitive baseline has already shifted. The question for 2026 isn’t whether AI exists in the market. It’s whether the organization can run it like it runs people—clear authority, clear limits, clear accountability—before speed turns into sloppiness.