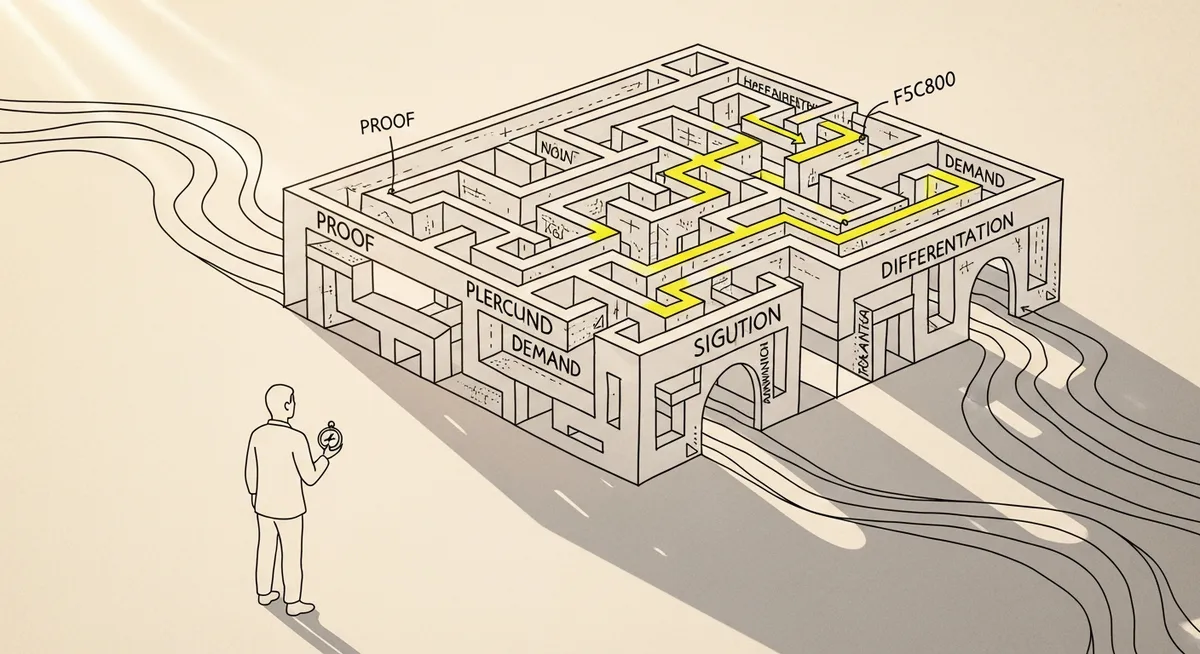

If branded search is rising but qualified pipeline isn’t, the fix usually isn’t “more keywords.” The constraint is missing proof in the public record, where buyers (and AI summaries) form beliefs before they ever search.

That sounds abstract until you look at what’s changing in 2026: global consumer tech spending is projected to stay flat after growing 3% to US$1.3T in 2025 (Query 1 results). Flat markets don’t forgive weak differentiation. Share-shift becomes the job. And “proof” becomes a GTM input, not a brand nice-to-have.

Here’s the tension most teams feel but can’t quite measure: search is where demand gets captured, attributed, and celebrated. But the belief that makes someone search in the first place often forms somewhere else. Off-site. Zero-click. Sometimes inside an AI answer.

So the move isn’t “do less search.” It’s to stop treating search as the beginning of the journey and start treating it as the receipt.

The 2026 distribution problem: clicks are optional, evidence isn’t

Two data points from the research brief sit uncomfortably next to each other. Early 2026 reporting shows 68% of US consumers used AI tools in the past three months, and 19% used AI tools to discover or decide on tech products (Query 1 results). At the same time, zero-click behavior is real: 40.3% of US Google queries in March 2025 ended with no click (Query 3 results).

Different years, same direction. Fewer guaranteed site visits. More decisions made on-platform, or at least influenced there. That’s not “SEO is dead.” It’s worse than that: the evaluation step is getting compressed into places that marketers don’t fully control and that analytics won’t credit.

Now stack on Google’s own push. In 2026, Google Demand Gen campaigns are positioned as a shift from keyword-only capture toward AI-powered, visual, full-funnel demand creation across YouTube, Google surfaces, and connected TV (Query 3 results). Updates emphasize shoppability and measurability—like shoppable CTV ads and measurement approaches that include attributed branded searches (Query 3 results). That’s Google telling the market, out loud, that “search intent” isn’t the only intent that matters anymore.

But the important part isn’t the ad product. It’s the implication: if the buyer can be influenced (or satisfied) without clicking, then the unit of competition becomes the evidence that shows up where they’re reading, watching, and asking.

Define “public evidence” like an operator, not a philosopher

“Public evidence” sounds like a concept deck until it’s defined in measurable artifacts. In the research brief, experts describe public evidence—consumer surveys, perception studies, expert testimony—as influential because it reveals preferences, validates ideas, and informs pricing and marketing decisions (Query 2 results). And in legal contexts, courts can reject biased or methodologically flawed surveys (Query 2 results). Translation: evidence works when it’s rigorous, and it backfires when it’s sloppy.

For B2B demand gen, the operator definition is simpler: public evidence is any claim-supporting proof that is crawlable, quotable, and independently legible to someone who doesn’t trust your landing page copy.

Not vibes. Not “customer-first.” Proof.

And yes, it includes your site. But in 2026, it also includes the surfaces that buyers and AI systems consume without visiting you: reviews, forums, third-party comparisons, snippets, video transcripts, and the citations that models can repeat. The research brief explicitly flags this dynamic (Query 3 results): AI-powered search and AI summaries are changing click behavior, which increases the importance of on-platform presence and of proof signals that can be consumed without a site visit.

There’s another way to say it that RevOps teams tend to appreciate: public evidence is a leading indicator for future branded demand. Search volume is the lagging indicator.

One move: build a “Public Evidence Ledger” and ship one proof asset per week

This article only needs one tactic. Here it is: create a Public Evidence Ledger, then run it like a production system. One proof asset per week, shipped to the surfaces where decisions happen. Not buried in a PDF. Not trapped in a sales deck.

Step 1: Inventory your claims (and pick the one that actually carries risk). List the 5–10 claims that show up in positioning and sales conversations: time-to-value, accuracy, compliance posture, implementation effort, switching cost, reliability. Then pick one claim where disbelief blocks pipeline. One. Focus wins.

Step 2: Define what “counts” as evidence (and what doesn’t). A product screenshot is not evidence. A founder quote is not evidence. A single testimonial is weak evidence. Better options: aggregated outcomes (with methodology), transparent reporting, customer-perception data with sample details, or third-party references that can be checked. Borrow the legal mindset from the research brief (Query 2 results): if a court would reject it as biased, a buyer will too, eventually.

Step 3: Publish it in a machine-readable way. Write the proof as a page with a clear claim, a clear method, and a clear number (or a clear limitation if the number can’t be shared). Then distribute it into places that get indexed and summarized: YouTube descriptions and transcripts, partner directories if they exist, review responses, and any high-authority third-party profiles you control. This is how you play into the zero-click and AI-summary reality flagged in the research brief (Query 3 results).

Step 4: Tie it to a directional measurement plan. Don’t pretend last-click proves incrementality. Use directional attribution plus a holdout where possible. If you can’t do a holdout, at least pre-register what you expect to move and what you’ll treat as noise.
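The ledger itself can be very small. Here is a minimal Python sketch, assuming the ledger only needs to record what shipped, where, and when, and to flag weeks in which nothing shipped. The names `ProofAsset`, `EvidenceLedger`, and `weeks_without_a_ship`, and the sample entries, are hypothetical illustrations, not part of any tool or of the research brief.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class ProofAsset:
    """One shipped proof asset. Field names are illustrative assumptions."""
    claim: str              # the claim this asset supports
    evidence_type: str      # e.g. "aggregated outcomes"
    method_published: bool  # is the methodology stated next to the number?
    surfaces: list[str]     # where the asset was distributed
    shipped_on: date


@dataclass
class EvidenceLedger:
    assets: list[ProofAsset] = field(default_factory=list)

    def add(self, asset: ProofAsset) -> None:
        self.assets.append(asset)

    def weeks_without_a_ship(self, start: date, weeks: int) -> list[int]:
        """Return 1-based week numbers (counted from `start`) with no shipped asset."""
        missed = []
        for week in range(weeks):
            week_start = start + timedelta(weeks=week)
            week_end = week_start + timedelta(days=7)
            if not any(week_start <= a.shipped_on < week_end for a in self.assets):
                missed.append(week + 1)
        return missed


# Made-up entries, for illustration only.
ledger = EvidenceLedger()
ledger.add(ProofAsset("time-to-value", "aggregated outcomes", True,
                      ["site proof page", "YouTube transcript"], date(2026, 1, 7)))
ledger.add(ProofAsset("time-to-value", "customer-perception data", True,
                      ["review site profile"], date(2026, 1, 21)))

missed = ledger.weeks_without_a_ship(start=date(2026, 1, 5), weeks=3)
print(missed)
```

With these sample dates the check reports week 2 as a missed week, which is the kind of gap the weekly cadence is meant to expose.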

The hypothesis (make it falsifiable)

If we publish one rigorous proof asset per week for 6 weeks (method + numbers + limitations) and syndicate each one to the surfaces that show up in AI summaries and zero-click results, then branded search volume and branded-assisted conversions will increase relative to baseline, because buyers will encounter credible, repeatable evidence before they reach the keyword stage.

What to measure (and what not to over-interpret)

Primary metric: branded search volume trend (directional) plus branded-assisted conversions (not last-click) over a 6–8 week window.

Secondary metrics: conversion rate on high-intent pages, sales cycle length for influenced opportunities, and win rate in the segment where the claim matters most.

Guardrails: don’t let proof production cannibalize pipeline work. Cap weekly effort (for example, one marketer + one SME for a fixed block of hours). If paid distribution is used, watch CPA and downstream opportunity quality.

Stop-loss threshold: if after 6 weeks there’s no movement in branded search trend and no lift in branded-assisted conversions versus baseline seasonality, pause and reassess the claim selection or the distribution surfaces. The evidence may be true but irrelevant—or relevant but invisible.
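The stop-loss rule is easier to honor if it is written down as a check before the test starts. Here is a rough Python sketch, assuming weekly values for both metrics, a seasonally comparable baseline window, and a pre-registered noise band. The 5% band, the function names, and the sample numbers are all hypothetical choices for illustration.

```python
from statistics import mean


def relative_lift(baseline: list[float], test: list[float]) -> float:
    """Relative change of the test-window mean versus the baseline mean."""
    base = mean(baseline)
    if base == 0:
        raise ValueError("baseline mean is zero; relative lift is undefined")
    return (mean(test) - base) / base


def stop_loss_triggered(
    search_baseline: list[float],
    search_test: list[float],
    conv_baseline: list[float],
    conv_test: list[float],
    noise_band: float = 0.05,  # assumed threshold; agree on it before launch
) -> bool:
    """True when neither metric rose above the noise band (a decline also counts)."""
    search_lift = relative_lift(search_baseline, search_test)
    conv_lift = relative_lift(conv_baseline, conv_test)
    return search_lift <= noise_band and conv_lift <= noise_band


# Made-up weekly values: branded search index and branded-assisted conversions.
pause = stop_loss_triggered(
    search_baseline=[100, 100, 100, 100, 100, 100],
    search_test=[102, 101, 103, 102, 104, 103],
    conv_baseline=[10, 10, 10, 10, 10, 10],
    conv_test=[10, 11, 10, 10, 11, 10],
)
print(pause)
```

In this sample both lifts stay inside the band (about 2.5% and 3.3%), so the check says to pause and reassess. This is a directional comparison of means, not a significance test, so treat the output as a prompt for the readout conversation rather than a verdict.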

Run it this week (setup, launch, readout, next test)

Setup (Days 1–2): Owner = demand gen lead; approver = legal/compliance (if needed); SME = product or CS. Pick one claim. Write a one-page “evidence spec”: claim, metric definition, data source, time window, exclusions, and what you will not claim.
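One way to keep the evidence spec honest is to treat it as a record that cannot be approved while a field is blank. The sketch below assumes the six fields named above map one-to-one onto a Python dataclass; the class name, the `missing_fields` helper, and the placeholder values are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class EvidenceSpec:
    """One-page evidence spec; field names mirror the list in the Setup step."""
    claim: str
    metric_definition: str
    data_source: str
    time_window: str
    exclusions: str
    will_not_claim: str

    def missing_fields(self) -> list[str]:
        """Names of fields that are empty or whitespace-only."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Placeholder values only; a real spec would carry the team's own wording.
draft = EvidenceSpec(
    claim="<the one claim where disbelief blocks pipeline>",
    metric_definition="<how the number is computed>",
    data_source="<system of record>",
    time_window="<start and end dates>",
    exclusions="",
    will_not_claim="<what this proof does not show>",
)
gaps = draft.missing_fields()
print(gaps)
```

Here the draft is flagged because `exclusions` was left blank, which is usually the field a skeptical reader asks about first.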

Launch (Days 3–4): Publish a single proof page on your site with a plain URL and a tight structure: Claim → Method → Result → Limits. Repurpose it into one short video script for YouTube (the transcript matters in a world of AI summaries). Post a condensed version to one third-party surface where you already have a presence (review site profile, community post, or documentation page that gets indexed).

Budget range: US$0–US$2,000 is enough to start. If paid is used, keep it small and diagnostic. The point is signal, not scale.

Tools: whatever analytics stack exists today is fine, with one upgrade: a simple baseline tracker (weekly branded search trend, assisted conversions, and a note log of what shipped). Fancy dashboards don’t fix missing evidence.

Readout (Day 7): Don’t expect pipeline in a week. Look for leading indicators: increased branded impressions, more consistent brand-name mentions in inbound conversations, improved conversion rate on high-intent pages. Directional, not definitive.

Next test (Week 2): Ship proof #2, but change only one variable: either the surface (where it’s published) or the claim (what it proves). Not both. Otherwise, attribution becomes storytelling.
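The one-variable rule can be checked mechanically before proof #2 ships. Here is a minimal Python sketch, assuming each weekly test is described by a small dictionary of settings. The keys `claim` and `surface` come from the text; the function names and sample values are hypothetical.

```python
def changed_variables(previous: dict[str, str], current: dict[str, str]) -> list[str]:
    """Sorted keys whose values differ between two test configurations."""
    keys = sorted(set(previous) | set(current))
    return [k for k in keys if previous.get(k) != current.get(k)]


def is_clean_test(previous: dict[str, str], current: dict[str, str]) -> bool:
    """True when exactly one variable changed from the previous test."""
    return len(changed_variables(previous, current)) == 1


# Made-up configurations for illustration.
week_1 = {"claim": "time-to-value", "surface": "site proof page"}
week_2 = {"claim": "time-to-value", "surface": "YouTube transcript"}
week_2_muddled = {"claim": "implementation effort", "surface": "YouTube transcript"}

print(changed_variables(week_1, week_2), is_clean_test(week_1, week_2))
print(changed_variables(week_1, week_2_muddled), is_clean_test(week_1, week_2_muddled))
```

The first comparison changes only the surface and passes. The second changes both the claim and the surface and fails, which is the case where any movement in the metrics can no longer be tied to one cause.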

The trade-off is real: if the proof forces specificity (and it should), lead volume will drop before lead quality improves. Some prospects will self-disqualify. That’s not a bug. It’s unit economics.

Search will still capture what it captures. But in 2026—when AI-mediated discovery is rising (Query 1 results) and clicks are increasingly optional (Query 3 results)—the better bet is to treat public evidence as demand creation infrastructure. Publish the proof. Make it legible. Keep it alive. If you don’t shape the public record, something else will.