LLM referrals can convert unusually well—18% in one Search Engine Land analysis—yet they’re only ~0.13% of traffic. That mismatch is exactly why “which model works” is an ops problem, not a vibes problem.

One of the strangest numbers in marketing analytics right now is this: LLM-referred traffic can convert extremely well, yet it’s barely a rounding error in volume.

Search Engine Land summarized an analysis showing LLM referrals hitting an 18% conversion rate, higher than other sources in that dataset, while LLM referrals made up only about 0.13% of total traffic (roughly 1/25 the volume of SEO or direct traffic). Microsoft Clarity, Bubblegum Search, and Amsive Research have reported similar patterns in different cuts of data: 3x the conversion rate of other channels (Clarity), roughly 2x better than organic search (Bubblegum Search), and 7.05% vs 5.81% conversion for AI-driven sessions vs organic search on high-traffic sites (Amsive).
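To see how an 18% rate and a 0.13% share can coexist, here is a minimal Python sketch of the arithmetic. The 0.13% share and 18% rate come from the Search Engine Land summary; the one-million-session total and the 3% conversion rate for all other traffic are hypothetical placeholders, not figures from any of the studies above.

```python
def conversion_mix(total_sessions: int, llm_share: float,
                   llm_cr: float, other_cr: float) -> dict[str, float]:
    """Split sessions into LLM vs. other traffic and compare their conversions."""
    llm_sessions = total_sessions * llm_share
    other_sessions = total_sessions - llm_sessions
    llm_conversions = llm_sessions * llm_cr
    other_conversions = other_sessions * other_cr
    return {
        "llm_sessions": llm_sessions,
        "llm_conversions": llm_conversions,
        "other_conversions": other_conversions,
        # Fraction of all conversions that LLM referrals account for
        "llm_share_of_conversions": llm_conversions / (llm_conversions + other_conversions),
    }


# Published share and rate; the session total and other_cr are hypothetical.
mix = conversion_mix(total_sessions=1_000_000, llm_share=0.0013,
                     llm_cr=0.18, other_cr=0.03)
for name, value in mix.items():
    print(f"{name}: {value:,.4f}")
```

Under these assumptions, LLM referrals produce about 234 of roughly 30,195 conversions, which is less than 1% of the total despite converting at six times the placeholder rate.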

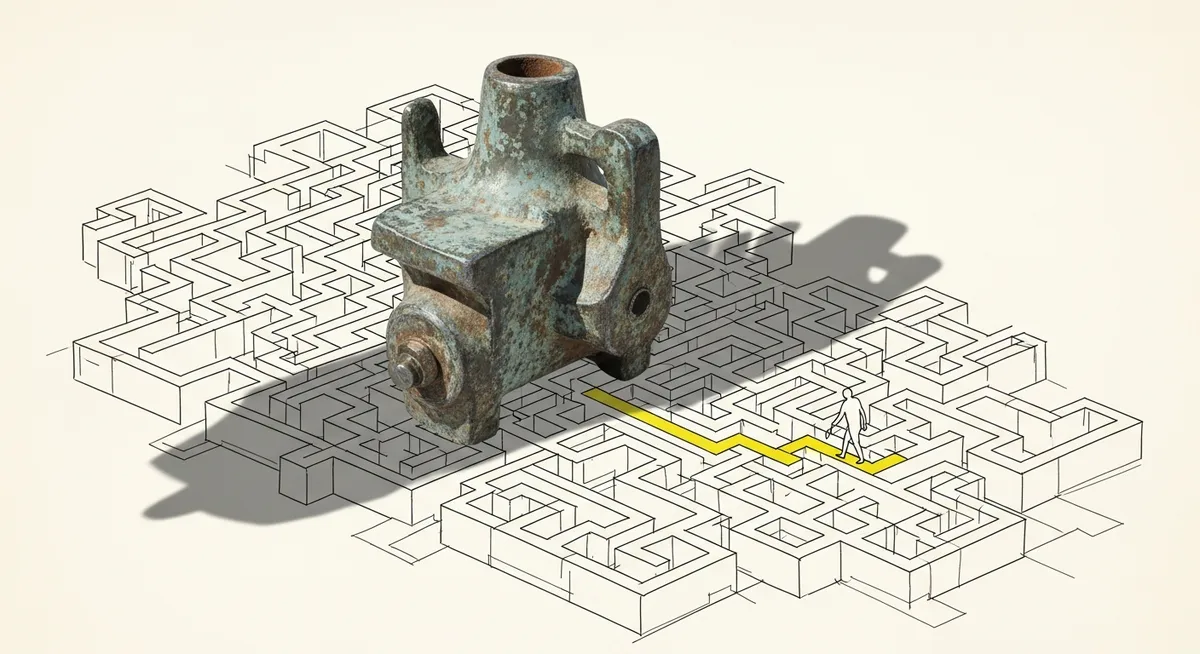

That’s the tension. High intent, tiny volume. And it leads to the question teams keep asking in 2026: Which LLM is actually working for us?

The tempting move is to pick a winner based on popularity (optimize for ChatGPT, Perplexity, or Gemini) and hope the rest follows. The more reliable approach is duller, more operational, and far more defensible: define the use case, benchmark it, pilot it, instrument it, and keep re-checking it.

Why this matters now: LLMs are moving from “traffic source” to “workflow layer”

LLMs aren’t only showing up as referrers. In 2026, the direction of enterprise deployments (as summarized in the research brief) is toward context-aware copilots embedded in day-to-day tools, predictive analytics that can explain forecasts in plain English, multimodal inputs, and agentic/multi-agent orchestration that executes multi-step work—paired with tighter governance and integrations as companies push from pilots to production.

So “which LLM works” is no longer a brand debate. It’s a systems decision that touches cost, privacy, reliability, and measurement. For a marketing ops leader, that’s familiar territory: define the contract of what the system must do, then validate it under real constraints.

And there’s another reason it matters: the upside is easy to overstate. Some studies show AI referrals converting at roughly the same rate as SEO, depending on the site and methodology, which is a polite way of saying the averages are not a strategy. The only numbers that count are the ones from the property being managed.
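One way to check whether LLM referrals really outperform organic search on a given property is a two-proportion z-test on that property’s own session and conversion counts. The sketch below is a standard normal-approximation test, not a method taken from any of the cited studies; the counts are hypothetical, chosen to sit near the 7.05% vs 5.81% rates Amsive reported.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for a difference between two conversion rates.

    Uses the pooled-proportion normal approximation, which assumes independent
    sessions and a reasonable number of conversions in each group.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


organic = (3_486, 60_000)  # hypothetical: 5.81% conversion
for llm in [(35, 500), (1_410, 20_000)]:  # hypothetical: 7.0% and 7.05%
    z, p = two_proportion_z_test(*llm, *organic)
    print(f"LLM {llm[0]}/{llm[1]} vs organic: z = {z:.2f}, p = {p:.3g}")
```

With 500 LLM sessions, a gap of roughly one percentage point is not distinguishable from noise at conventional thresholds (p is around 0.26); with 20,000 sessions, a similar gap is. Small LLM volumes mean a property’s own numbers need time to accumulate before they settle the question.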

Start “use-case first,” or the evaluation will collapse into model fandom

Multiple expert summaries in the brief converge on the same guidance: selection should be use-case first. Define the business problem and the repetitive, high-value tasks before touching a model leaderboard. It sounds obvious. It almost never happens.

Here’s what “use-case first” looks like in practice for demand gen ops, without pretending there’s one universal best model in 2026 (because the same expert summaries explicitly warn there isn’t):

- AI discovery / LLM referral measurement: Identify LLM sources in analytics, segment them cleanly, and measure conversion and assisted conversion separately from SEO/PPC.

- Content and visibility workflows: Drafting, summarization, FAQ generation, and on-page changes tied to a governed approval process.

- Ops automation: Classification, routing, enrichment, and analysis tasks where latency and auditability matter as much as “quality.”
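For the first of these use cases, segmenting LLM referrals usually starts with mapping referrer hostnames to sources. The Python sketch below assumes a small, hypothetical hostname list; it is not exhaustive, it is not any analytics vendor’s built-in channel definition, and it should be checked against the referrers that actually appear in the property’s data.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Assumed, non-exhaustive mapping of referrer hostnames to LLM sources.
# Check it against the referrers that actually show up in your analytics.
LLM_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
    "copilot.microsoft.com": "Copilot",
    "claude.ai": "Claude",
}


def classify_referrer(referrer_url: str) -> str:
    """Return an LLM source label for a referrer URL, or 'other'."""
    host = (urlparse(referrer_url).hostname or "").lower()
    host = host.removeprefix("www.")  # treat www.perplexity.ai like perplexity.ai
    return LLM_REFERRER_HOSTS.get(host, "other")


def conversion_by_source(sessions: list[tuple[str, bool]]) -> dict[str, float]:
    """Conversion rate per source from (referrer_url, converted) pairs."""
    counts: dict[str, list[int]] = defaultdict(lambda: [0, 0])  # [sessions, conversions]
    for referrer, converted in sessions:
        bucket = counts[classify_referrer(referrer)]
        bucket[0] += 1
        bucket[1] += int(converted)
    return {source: conv / total for source, (total, conv) in counts.items()}


sample = [
    ("https://chatgpt.com/", True),
    ("https://www.perplexity.ai/search?q=crm", False),
    ("https://www.google.com/", False),
]
print(conversion_by_source(sample))
```

Keeping the mapping in one place makes it easy to extend as new assistants start sending referrals, and keeps LLM traffic out of the generic referral bucket so it can be compared cleanly with SEO and PPC.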

But the key is not the category. It’s the acceptance criteria. The most practical approach is to write down what “working” means in metrics the business already respects: conversion rate, qualified pipeline influence, time-to-publish, error rate, latency, and total cost of ownership (TCO).

Then pick candidates. Not the other way around.
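Writing acceptance criteria down can be as literal as a small config object checked against pilot results. In this Python sketch the metric names come from the list above, but the thresholds, the FAQ-drafting use case, and the pilot numbers are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCriteria:
    """Hypothetical thresholds for one use case (say, FAQ drafting)."""
    max_error_rate: float = 0.02         # share of outputs rejected in review
    max_latency_s: float = 5.0           # per request
    max_time_to_publish_h: float = 24.0  # draft to approved page
    max_monthly_tco_usd: float = 2_000.0


def unmet_criteria(c: AcceptanceCriteria, observed: dict[str, float]) -> list[str]:
    """Return the criteria an observed pilot misses; an empty list means 'working'."""
    checks = {
        "error_rate": observed["error_rate"] <= c.max_error_rate,
        "latency_s": observed["latency_s"] <= c.max_latency_s,
        "time_to_publish_h": observed["time_to_publish_h"] <= c.max_time_to_publish_h,
        "monthly_tco_usd": observed["monthly_tco_usd"] <= c.max_monthly_tco_usd,
    }
    return [name for name, ok in checks.items() if not ok]


pilot = {"error_rate": 0.015, "latency_s": 7.2,
         "time_to_publish_h": 6.0, "monthly_tco_usd": 1_400.0}
print(unmet_criteria(AcceptanceCriteria(), pilot))  # ['latency_s']
```

Because the criteria exist before any model is chosen, every candidate is judged against the same bar.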

Benchmarks are necessary; pilots decide the winner

General model rankings are comforting because they’re simple. They’re also a trap. The research brief’s expert guidance recommends task-specific benchmarks and real-world pilots (including edge cases), with re-evaluation on a quarterly cadence to avoid performance drift.

That quarterly note matters more than it sounds. “Set it and forget it” fails quietly with LLMs: prompts change, tools change, vendors ship updates, and what used to be acceptable output starts missing the mark. Nobody notices until a stakeholder does.
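A quarterly re-check can be reduced to comparing each task’s current score against the baseline recorded when the model was approved. This Python sketch assumes scores where higher is better (for example, the pass rate on the pilot task set) and uses a hypothetical five-point absolute tolerance; the task names and numbers are illustrative.

```python
def detect_drift(baseline: dict[str, float], current: dict[str, float],
                 tolerance: float = 0.05) -> dict[str, float]:
    """Return {task: drop} for tasks whose score fell by more than `tolerance`.

    A task missing from `current` is treated as a score of 0, so it is flagged.
    """
    drops = {}
    for task, base_score in baseline.items():
        drop = base_score - current.get(task, 0.0)
        if drop > tolerance:
            drops[task] = round(drop, 4)
    return drops


baseline = {"faq_drafting": 0.92, "lead_routing": 0.88}  # at approval (hypothetical)
this_quarter = {"faq_drafting": 0.91, "lead_routing": 0.79}
print(detect_drift(baseline, this_quarter))  # {'lead_routing': 0.09}
```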

A pilot that’s worth anything has three parts:

- A controlled task set (your real tasks, not generic tests): include edge cases that break naive prompts.

- A scorecard: accuracy/precision for the task, latency, cost per successful output, and compliance risk.

- Instrumentation: logging prompts/outputs where allowed, and making the workflow auditable enough to debug.
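The scorecard can be computed directly from logged pilot runs. This Python sketch assumes each run has already been judged correct or incorrect and reviewed for compliance; the `PilotRun` fields, the nearest-rank p95 latency, and the sample data are illustrative choices, not a standard.

```python
import math
from dataclasses import dataclass


@dataclass
class PilotRun:
    task_id: str
    correct: bool          # judged against the task's expected output
    latency_s: float
    cost_usd: float
    compliance_flag: bool  # True if a reviewer flagged a compliance issue


def p95(values: list[float]) -> float:
    """95th percentile using the nearest-rank method."""
    ordered = sorted(values)
    return ordered[math.ceil(0.95 * len(ordered)) - 1]


def scorecard(runs: list[PilotRun]) -> dict[str, float]:
    n = len(runs)
    successes = sum(r.correct for r in runs)
    total_cost = sum(r.cost_usd for r in runs)
    return {
        "accuracy": successes / n,
        "p95_latency_s": p95([r.latency_s for r in runs]),
        # Failed outputs still cost money, so divide total spend by successes only
        "cost_per_success_usd": total_cost / successes if successes else math.inf,
        "compliance_flag_rate": sum(r.compliance_flag for r in runs) / n,
    }


runs = [
    PilotRun("faq-01", True, 1.2, 0.004, False),
    PilotRun("faq-02", True, 0.9, 0.003, False),
    PilotRun("faq-03-edge-case", False, 3.5, 0.006, False),
    PilotRun("faq-04", True, 1.1, 0.004, True),
]
print(scorecard(runs))
```

Dividing total cost by successful outputs, rather than by all calls, keeps a cheap but unreliable model from looking better than it is.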

The picture is more complex, though: the “best” model may not be the one that scores highest on quality. It may be the one that meets the threshold at a cost and governance level the organization can live with.
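That threshold logic can be made explicit: filter candidates to those that clear the quality bar and pass compliance review, then take the cheapest. In this Python sketch the model names, accuracy figures, and per-output costs are hypothetical.

```python
from typing import Optional

# Hypothetical pilot results per candidate model.
CANDIDATES = {
    "model_a": {"accuracy": 0.96, "cost_per_success_usd": 0.020, "compliance_ok": True},
    "model_b": {"accuracy": 0.91, "cost_per_success_usd": 0.006, "compliance_ok": True},
    "model_c": {"accuracy": 0.84, "cost_per_success_usd": 0.002, "compliance_ok": True},
    "model_d": {"accuracy": 0.93, "cost_per_success_usd": 0.004, "compliance_ok": False},
}


def pick_model(candidates: dict[str, dict], min_accuracy: float) -> Optional[str]:
    """Cheapest candidate that meets the accuracy bar and passes compliance review."""
    eligible = [
        name for name, m in candidates.items()
        if m["accuracy"] >= min_accuracy and m["compliance_ok"]
    ]
    if not eligible:
        return None
    return min(eligible, key=lambda name: candidates[name]["cost_per_success_usd"])


print(pick_model(CANDIDATES, min_accuracy=0.90))  # model_b
```

Here model_a scores highest, but model_b clears the bar at under a third of the cost, and model_d is excluded despite being cheaper because it fails the compliance check.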

Domain-specific vs generalist, open-source vs proprietary: the tradeoffs ops teams can’t dodge

The brief draws a clean line that helps decision-making: for industry-specific work, domain-specific LLMs (DSLMs) can outperform generalist models on precision tasks (think regulated finance/compliance or coding benchmarks), while generalists can be better for hybrid workflows. That’s not an abstract debate; it changes how many models need to be supported and how routing works.

Many organizations end up with multi-model routing: send simple, high-volume tasks to a cheaper model, and reserve a stronger model for complex work. It’s a finance decision disguised as an AI decision.
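A minimal routing rule might look like the Python sketch below. The tier names, the set of “simple” task types, the 2,000-token cutoff, and the per-task prices are all hypothetical; a real router might use richer signals, but the cost logic is the same.

```python
# Hypothetical model tiers and per-task prices (USD); not real vendor pricing.
CHEAP_MODEL = "small-tier"
STRONG_MODEL = "large-tier"
PRICE_PER_TASK = {CHEAP_MODEL: 0.001, STRONG_MODEL: 0.02}

SIMPLE_TASK_TYPES = {"classify", "route", "tag"}


def route(task_type: str, input_tokens: int, max_simple_tokens: int = 2_000) -> str:
    """Send short, simple tasks to the cheap tier; everything else to the strong tier."""
    if task_type in SIMPLE_TASK_TYPES and input_tokens <= max_simple_tokens:
        return CHEAP_MODEL
    return STRONG_MODEL


def blended_cost(tasks: list[tuple[str, int]]) -> float:
    """Total cost of a batch of (task_type, input_tokens) tasks under the routing rule."""
    return sum(PRICE_PER_TASK[route(t, tokens)] for t, tokens in tasks)


# Hypothetical monthly mix: 9,000 short classification jobs, 1,000 analyses.
workload = [("classify", 400)] * 9_000 + [("analysis", 6_000)] * 1_000
routed = blended_cost(workload)
all_strong = len(workload) * PRICE_PER_TASK[STRONG_MODEL]
print(f"routed: ${routed:,.2f}  vs  everything on the strong tier: ${all_strong:,.2f}")
```

Under these made-up numbers, routing cuts the batch cost from $200 to about $29, which is the finance argument for routing in miniature.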

Then comes the other fork: proprietary vs open-source. The expert summaries in the brief frame it as a balance across cost, privacy/compliance, customization needs, and vendor lock-in risk. Proprietary models may deliver frontier performance but raise concerns about data handling and cost at scale. Open-source options can offer customization and on-prem control, but increase operational burden.
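One way to document that tradeoff is a weighted decision matrix. In this Python sketch the 1 to 5 ratings and both weightings are hypothetical placeholders a team would replace with its own assessments; the point is that the winner follows from the weights, so the weights are what should be written down and defended.

```python
# Placeholder ratings on a 1-5 scale; higher is better on every criterion.
RATINGS = {
    "proprietary": {"quality": 5, "cost_at_scale": 2, "privacy_control": 2,
                    "customization": 2, "low_lock_in": 2, "low_ops_burden": 5},
    "open_source": {"quality": 4, "cost_at_scale": 4, "privacy_control": 5,
                    "customization": 5, "low_lock_in": 5, "low_ops_burden": 2},
}

# Two hypothetical weightings (each sums to 1.0).
CONVENIENCE_FIRST = {"quality": 0.4, "low_ops_burden": 0.4, "cost_at_scale": 0.1,
                     "privacy_control": 0.1, "customization": 0.0, "low_lock_in": 0.0}
CONTROL_FIRST = {"quality": 0.2, "low_ops_burden": 0.1, "cost_at_scale": 0.2,
                 "privacy_control": 0.3, "customization": 0.1, "low_lock_in": 0.1}


def weighted_score(ratings: dict[str, int], weights: dict[str, float]) -> float:
    return sum(weights[criterion] * ratings[criterion] for criterion in weights)


def best_option(weights: dict[str, float]) -> str:
    return max(RATINGS, key=lambda option: weighted_score(RATINGS[option], weights))


for label, weights in [("convenience-first", CONVENIENCE_FIRST),
                       ("control-first", CONTROL_FIRST)]:
    scores = {o: round(weighted_score(r, weights), 2) for o, r in RATINGS.items()}
    print(label, scores, "->", best_option(weights))
```

With identical ratings, the convenience-first weighting favors the proprietary option and the control-first weighting favors open source.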

Seen from the other side, this is what marketing ops has always done: choose between convenience and control, then document the consequences so the business doesn’t pretend it can have both for free.

What to take from the webinar trend: “prove it” beats “plan it”

The source webinar framing gets the core instinct right: don’t spread effort equally across every LLM because the conversation is loud. Prioritize based on performance by platform and industry, and tie that to outcomes stakeholders recognize.

DemGenDaily’s useful stance here isn’t to declare a single “best LLM.” It’s to push a repeatable evaluation loop: use-case definition, benchmark selection, pilot design, KPI dashboard (accuracy, latency, TCO, compliance), then quarterly re-checks to catch drift. Boring. Reliable. The kind of process that survives budget season.

And it closes the loop from the opening contradiction. If LLM referrals can convert at 18% in one analysis yet represent ~0.13% of traffic, the job isn’t to chase volume with guesswork. It’s to build measurement tight enough to notice the signal early, validate it quickly, and scale only what actually holds up under scrutiny.
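“Tight enough to notice the signal early” can be made concrete with a confidence interval on the LLM conversion rate. This Python sketch uses the Wilson score interval, a standard choice for proportions with small samples; the session and conversion counts are hypothetical, both set to an 18% observed rate.

```python
import math


def wilson_interval(conversions: int, sessions: int,
                    z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a conversion rate (z=1.96 gives roughly 95%)."""
    if sessions <= 0:
        raise ValueError("sessions must be positive")
    p = conversions / sessions
    denom = 1 + z**2 / sessions
    center = (p + z**2 / (2 * sessions)) / denom
    half = z * math.sqrt(p * (1 - p) / sessions + z**2 / (4 * sessions**2)) / denom
    return center - half, center + half


# Same 18% observed rate, very different (hypothetical) sample sizes.
for conv, sess in [(9, 50), (234, 1_300)]:
    low, high = wilson_interval(conv, sess)
    print(f"{conv}/{sess} = {conv / sess:.1%}  95% CI: {low:.1%} to {high:.1%}")
```

At 9 conversions from 50 sessions the interval runs from roughly 10% to 31%; at 234 from 1,300 it narrows to roughly 16% to 20%. The same headline rate carries very different weight depending on volume, which is why scaling decisions should wait for the interval to tighten rather than trust the point estimate.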

That’s what “which LLM is working” really means in 2026: not which one is famous, but which one still performs when it’s wired into a real system—with real constraints, real governance, and real numbers on the dashboard.