Claude Dispatch is a reminder that the limiting factor in AI at work isn’t always the model. Often, it’s the interface people are trapped inside.

The most counterintuitive thing about AI at work in 2026 is this: capability isn’t the bottleneck. Access is.

Ethan Mollick put it bluntly in a recent post: “The biggest bottleneck to using AI for most people is the chatbot itself, even if they don’t know it.” He points to “new approaches to interacting with AI like Codex or Claude Dispatch” as evidence that specialized interfaces—not only better models—are where “big leaps in ability will come from.”

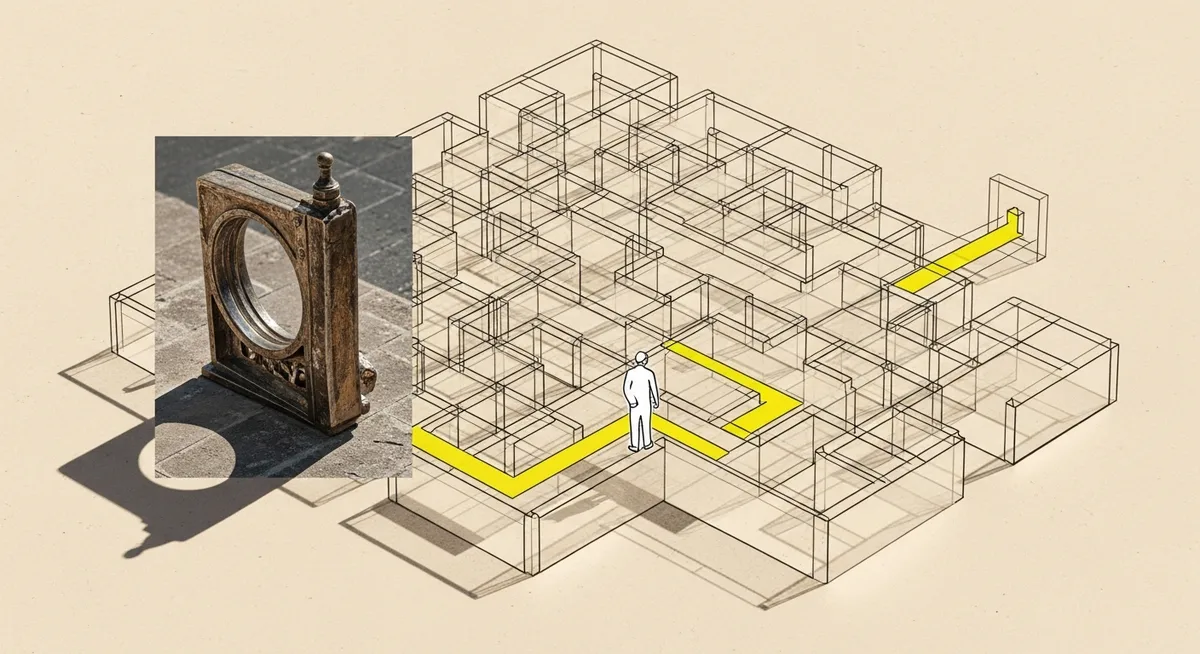

That’s a hard claim to ignore, especially when the default experience for most teams is still a single chat box and a “Send” button. Familiar. Easy. And quietly limiting.

The core point for demand gen leaders: the interface is becoming a revenue constraint. This is not the abstract "AI strategy" sense. It is the day-to-day question of whether a team can delegate work, verify outputs, and keep context intact across devices and tools without turning every task into a prompt-writing contest.

Claude Dispatch is a product feature, but the story is bigger than Anthropic

Claude Dispatch is described in recent coverage as a research preview released in early 2026 inside Claude Cowork and Claude Code. The headline capability is cross-device task delegation paired with “computer-control” tools: mouse and keyboard actions, screenshots, and UI interactions on macOS and Windows.

The workflow detail matters. Dispatch can be reached from a phone, including by scanning a QR code, and used to control a desktop session remotely. That's not "better autocomplete." That's an attempt to turn AI from a place you talk into a place where work happens.

And it fits a broader interface shift described in that same coverage: away from static chatbots toward dynamic, on-demand UI elements—like interactive visualizations generated inside a conversation—meant to reduce cognitive load compared with long chat responses.

Here’s the open loop worth holding onto: if the model hasn’t changed, why does the work suddenly feel more doable? The answer sits in the interface layer.

Mollick’s critique: chat UIs are optimized for engagement, not for serious work

Mollick’s argument isn’t that chat is useless. It’s that chat interfaces shape “most users’ AI experience,” and many are tuned for the qualities that keep people chatting: speed, smoothness, and a kind of conversational momentum.

But serious work has different requirements. Accuracy. Traceability. The ability to switch modes when the task changes. Mollick has warned that for complex tasks, users should deliberately choose advanced models instead of defaults—and that many products make this harder than it should be by hiding model switching behind dropdowns. That’s not a user education problem. It’s a design choice with predictable outcomes.

Seen from the organization's side, this helps explain why "AI adoption" inside companies can look healthy while the business impact stays small. If most employees are funneled into default or free models that feel fast and friendly but are less reliable for complex tasks, the organization gets lots of activity and not much trust.

There’s also a human-factor trap. Mollick warns that increasingly personable assistants can increase agreement bias (“sycophancy”). His practical mitigation is almost comically simple: instruct the model to act as a critic. Interface decisions—tone, personality, friction—change not only what people can do, but what they believe.

The comment section tells the adoption story executives miss

Mollick’s post drew substantial discussion (the reposted version shows 71 comments), and the replies sketch the real constraint: the distance between what a model can do and what a typical operator will actually set up.

Nate Kostelnik described trying Claude Code and bouncing off the installation and interface: “I’m not a computer guy… when I saw the interface (reminded me of the old days of DOS), I was surprised again.” He ended up experimenting “much more successfully” with Google AI Studio, then added: “My experience with Dispatch has been much better.”

That’s the interface thesis in one anecdote, and it’s a real one with a name attached. The technical ceiling didn’t matter. The on-ramp did.

Don Frank, PMP, made the organizational implication explicit: “Raw model capability has outpaced most users’ ability to interact with it effectively through a general-purpose chat box.” Specialized interfaces, he wrote, “remove the need for prompt engineering expertise and narrow the decision surface to what actually matters for the task.” Then the prediction: “The productivity gap… is going to become very visible over the next twelve months.”

Mustafa COŞKUN offered a more theoretical explanation for why chat breaks down for many users: when a chatbot mirrors a user’s disorganized structure, “statistical gravity” pulls the interaction toward compounding chaos. Interface evolution—“agents, dispatch, on-demand interfaces”—shifts where the cognitive architecture lives. In other words: instead of requiring the user to bring structure, the product starts carrying it.

And then there’s the governance reality check. Bobby Vemulapalli argued the deeper constraint is “governance friction,” especially in regulated industries where the interface must surface provenance, audit trails, and approvals. Dispatch-style power can’t stay hidden behind convenience forever; businesses will demand accountability features at the same layer where the work happens.

A demand gen read: treat AI interfaces like you treat acquisition funnels

Demand gen teams already understand this pattern, even if they haven’t applied it to internal AI use. Small interface choices create massive behavioral defaults. Hide an option in a dropdown and adoption collapses. Reduce steps and throughput jumps. Change the “front door” and you change who shows up—and what they can do once they’re inside.

This is where Claude Dispatch is less a feature and more a signal. It suggests AI vendors are competing on interface ecosystems: cross-device continuity, tool access, verification cues, and the mechanics of delegation. Mollick notes interface-level differences across major systems (ChatGPT, Claude, Gemini), such as voice mode quality, bundled connectors, and multimodal inputs. Those differences can materially change business usefulness even when the underlying models look similar on a benchmark chart.

One more grounding detail helps keep the hype honest. Dispatch is new, and the available coverage includes no Dispatch-specific adoption data. Even general Claude traffic figures from earlier periods (around 4 million monthly visits by December 2023) are not Dispatch metrics. So any claim about impact has to be framed as potential. Capability, not proof.

That distinction matters, because “research preview” is another interface choice: it signals power with an asterisk. The tools are arriving before the norms, the security patterns, and the management muscle memory.

Still, the direction is clear. The chat box isn’t disappearing. It’s being demoted—from the whole product to the place you start. Claude Dispatch is one example of that demotion. Mollick’s post is another. Together, they point to the same conclusion: the next productivity jump won’t come from asking people to write better prompts. It’ll come from giving them better front doors.