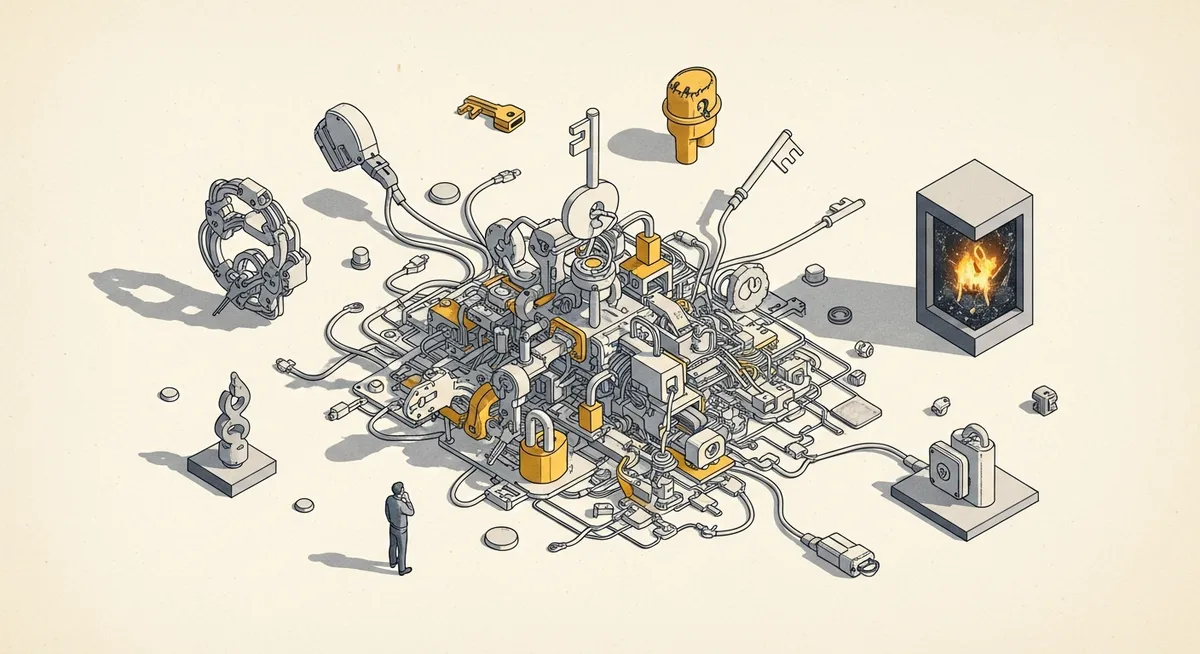

If an AI “agent” can execute across your GTM stack without a human review step, the upside is speed. The downside is also speed—just in the wrong direction. That trade-off is no longer theoretical in 2026, because agents are moving from assistive tools into revenue workflows (quote-to-cash, renewal motions, paid-to-pipeline handoffs) where mistakes don’t stay contained.

The adoption curve explains why the pressure is rising. One market projection summarized in the research brief pegs agentic AI growth from $5.25B in 2024 to $199.05B by 2034 (43.84% CAGR), with the enterprise segment projected from $2.58B in 2024 to $24.50B by 2030 (46.2% CAGR). The same summary claims 79% of organizations have some AI agent adoption and 96% planned to expand it in 2025. Whether or not those exact figures hold for every segment, the direction is clear: buyers are being pushed toward “more autonomous” by default.

But here’s the pattern interrupt: in GTM, more autonomy does not automatically mean more value. The safer path—often the higher-ROI path—is control first, autonomy second.

This matters now because agents are increasingly embedded inside systems of record. In April 2026, Salesforce demonstrated Agentforce Revenue Management aimed at automating quote-to-cash activities like cataloging, quoting, billing, product descriptions, and invoice explanations, explicitly framed around reducing DSO, compliance risk, and revenue leakage (per the research brief summary). Oracle also introduced sales and renewal agents spanning opportunity-to-cash, tying together SFA/CPQ/ERP/service data (again, per the brief). When “agentic” touches pricing, invoicing, and commitments, the GTM leader’s job changes: governance becomes a pipeline competency, not an IT afterthought.

Define the terms the way your RevOps team will feel them

For practical GTM work, “agentish” and “agentic” aren’t vibes. They’re operating models.

Agentish is automation that looks like an agent: it can recommend, draft, and even execute, but only inside a defined workflow with thresholds, approvals, and limited degrees of freedom. Humans still own intent and risk. That matches the source content’s framing that many revenue tech platforms behave this way today, with guardrails and approval steps.

Agentic is goal-driven, multi-step execution that can plan and act with minimal intervention—prioritizing leads, generating outreach, updating CRM fields, triggering sequences, and adjusting tactics as it goes (as summarized in the research brief). In the strongest version, it doesn’t just run the play. It rewrites the play.

The tension is obvious: GTM teams want throughput. Finance wants predictability. Legal wants defensibility. Sales wants something they can trust when the quarter gets weird.

Why “full autonomy” breaks first in revenue workflows

The core issue isn’t that models hallucinate. It’s that revenue processes multiply small errors.

The source content lays out the cascade: misclassify a lead and qualification rates change; mis-update a stage and the forecast shifts; hallucinate a signal and sellers chase ghosts. In isolation, those are annoyances. In a connected system, they become compounding variance—especially in pipeline management, where bias and data quality already distort reality.
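To make the compounding concrete, here is a minimal arithmetic sketch. The three steps and the 97% per-step accuracy are hypothetical numbers chosen for illustration, and the calculation assumes the steps fail independently, which real pipelines may not.

```python
import math


def clean_path_rate(step_accuracies: list[float]) -> float:
    """Share of records that pass every step without an error.

    Assumes errors at each step are independent (an illustrative simplification).
    """
    return math.prod(step_accuracies)


# Hypothetical: lead classification, stage update, and signal detection
# are each right 97% of the time.
rate = clean_path_rate([0.97, 0.97, 0.97])
print(f"{rate:.1%} of records are clean end to end")  # prints "91.3% of records are clean end to end"
```

Under those assumptions, roughly one record in eleven carries at least one error by the time it reaches the forecast, even though every individual step looks accurate.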

And accountability doesn’t disappear just because an agent acted. CFOs, boards, and regulators still expect a straight answer: what changed, when, and why. Agentish designs are built for that reality: audit logs, explainability at the signal level, human-in-the-loop approvals, configurable thresholds. The source article calls these deployment blockers when they’re missing. That’s accurate in practice: if a system can’t explain and roll back, it can’t be trusted with commitments.

There’s another layer that gets ignored in vendor demos: revenue is a trust system, not just a workflow. Sellers trust their manager’s forecast. Finance trusts the pipeline math. Customers trust what your team promises. Uncontrolled autonomy introduces reasoning drift and unclear ownership. When that trust cracks, teams don’t “adapt.” They retreat to spreadsheets and side channels.

The research brief includes a related risk datapoint: EY estimates losses from misconfigurations at up to 5% (as summarized). That’s not an “AI problem.” That’s a governance problem, and agents increase the blast radius of bad configuration because they execute more actions, faster.

The one move: set “controlled autonomy” guardrails before you expand use cases

If you only change one thing, change this: stop evaluating agent vendors by how autonomous the demo looks. Evaluate them by how well you can constrain and audit the autonomy you’ll actually deploy.

Here’s the 5-minute version you can run this week:

- Step 1 — Pick one workflow where errors are reversible. Good candidates: summarization, lead enrichment, routing suggestions, first-draft outbound. Avoid: pricing/discounting, legal language, invoice explanations, renewal terms. (Those can come later.)

- Step 2 — Write the sandbox using Objectives / Resources / Constraints. The research brief attributes this governance framing to BCG: define the objective (e.g., improve speed to lead), resources (which systems/data the agent can access), and constraints (brand rules, compliance rules, fields it can’t touch).

- Step 3 — Force an audit trail and an escalation path. Every action should be logged, and the agent should flag uncertainty for review (a transparency requirement echoed in the brief via MIT Sloan/SAP references). No log, no trust.

- Step 4 — Add a kill switch and a stop-loss threshold. Autonomy without a rollback plan is just risk. Decide in advance what failure looks like and who can pause execution.
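Steps 2 through 4 can be sketched as one small policy object. This is an illustration under stated assumptions, not any vendor's API: the `Sandbox` class, its field names, the 0.8 confidence cutoff, and the example systems and fields are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Sandbox:
    """Hypothetical Objectives / Resources / Constraints policy for one workflow."""
    objective: str
    allowed_systems: set[str]      # resources: systems the agent may touch
    blocked_fields: set[str]       # constraints: fields it must never write
    min_confidence: float = 0.8    # assumed cutoff; below it, escalate to a human
    paused: bool = False           # kill switch, flipped by a named owner
    audit_log: list[dict] = field(default_factory=list)

    def review(self, system: str, field_name: str, confidence: float) -> str:
        """Return 'allow', 'escalate', or 'block', and log the decision."""
        if self.paused:
            decision = "block"
        elif system not in self.allowed_systems or field_name in self.blocked_fields:
            decision = "block"
        elif confidence < self.min_confidence:
            decision = "escalate"
        else:
            decision = "allow"
        # Every proposed action is recorded, including the ones that were blocked.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "system": system,
            "field": field_name,
            "confidence": confidence,
            "decision": decision,
        })
        return decision


sandbox = Sandbox(
    objective="improve speed to lead",
    allowed_systems={"crm"},
    blocked_fields={"stage", "discount"},
)
first = sandbox.review("crm", "lead_summary", 0.93)   # in scope, confident
second = sandbox.review("crm", "stage", 0.99)         # constrained field
third = sandbox.review("crm", "lead_summary", 0.55)   # uncertain, needs a human
sandbox.paused = True                                 # someone hits the kill switch
fourth = sandbox.review("crm", "lead_summary", 0.93)
```

The design choice worth noting is that the log entry is written on every path, so a blocked or escalated action leaves the same trail as an allowed one.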

The hypothesis (make it falsifiable): If we deploy an agentish-first workflow for inbound lead triage where the agent only recommends routing and summarization (not stage changes), then sales acceptance rate will increase within two weeks because reps will spend less time parsing context and more time on qualified follow-up.

Success = lift in sales acceptance rate (primary). Guardrails = time-to-first-touch and meeting set rate by segment (secondary). Stop-loss = if acceptance rate drops for two consecutive weekly readouts or if misrouting exceeds an internally defined threshold, revert to the previous routing rules and review logs.
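The stop-loss rule is simple enough to encode so nobody relitigates it mid-quarter. The sketch below is one reading of it: a "drop" is assumed to mean a weekly readout lower than the one before it, and `should_revert` and its parameter names are hypothetical. If your team defines a drop against a pre-launch baseline instead, the comparison changes accordingly.

```python
def should_revert(weekly_acceptance: list[float],
                  misrouting_rate: float,
                  misrouting_limit: float) -> bool:
    """Return True when either stop-loss condition is met."""
    # Condition 1: acceptance rate fell in each of the last two weekly readouts.
    # That takes three readings: the two latest plus the one before them.
    two_drops = (
        len(weekly_acceptance) >= 3
        and weekly_acceptance[-1] < weekly_acceptance[-2] < weekly_acceptance[-3]
    )
    # Condition 2: misrouting exceeds the internally defined threshold.
    return two_drops or misrouting_rate > misrouting_limit


# Hypothetical readouts: acceptance slid from 40% to 38% to 35%.
revert = should_revert([0.40, 0.38, 0.35], misrouting_rate=0.02, misrouting_limit=0.05)
```

With these illustrative numbers the function returns `True`, which is the cue to restore the previous routing rules and review the logs.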

What to measure (and what not to over-interpret): directional attribution is fine for early signal, but don’t call it incrementality from a dashboard alone. Use a holdout where possible: keep a slice of leads on the old process so the readout isn’t just “the model got better because we looked at it.”
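One common way to carve out that holdout is to hash each lead ID into a stable bucket, so a lead never flips between the old and new process. This is a sketch, not a prescribed method: `in_holdout`, the 10% share, and the salt value are hypothetical choices.

```python
import hashlib


def in_holdout(lead_id: str, holdout_pct: int = 10, salt: str = "triage-pilot") -> bool:
    """Deterministically assign a lead to the holdout (old process) group."""
    # Salting lets a later experiment draw a different, independent split.
    digest = hashlib.sha256(f"{salt}:{lead_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100      # stable bucket in 0..99
    return bucket < holdout_pct


# Hypothetical lead IDs; roughly 10% should land in the holdout.
lead_ids = [f"lead-{n}" for n in range(10_000)]
holdout_share = sum(in_holdout(lead_id) for lead_id in lead_ids) / len(lead_ids)
```

Because assignment depends only on the ID and the salt, the split can be recomputed at readout time without storing a separate assignment table.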

Trade-off: this will reduce apparent “automation coverage” at first. That’s the point. You’re buying predictability before you buy speed.

When this is wrong: if the workflow is already tightly governed, reversible, and you have mature auditability (plus clean data), higher autonomy can be rational—especially in high-volume, low-judgment tasks. But revenue-critical systems should still earn that trust in layers.

Where agentic capabilities actually make sense in 2026

The research brief says 54% of agentic AI users apply it to sales/marketing (vs. 57% in customer service). That distribution matters: teams are already using agents where the cost of an error is lower, and where uncertainty can be escalated without breaking a contract.

So the near-term sweet spot looks like this (consistent with the source content): high-volume, low-judgment work; exception-based workflows with explicit escalation; preapproved plays with measurable criteria; ops and manager augmentation, not frontline replacement. Invoca’s March 2026 expansion into engaging and qualifying high-intent buyers across voice and SMS (per the brief) fits that pattern: it’s a conversion layer, not a pricing authority.

Autonomy should be earned. Not assumed.

That loops back to the opening constraint: if an agent can change the system of record without guardrails, you’re not scaling GTM. You’re scaling error propagation. In revenue, trust scales before autonomy—and the platforms and teams that act like that in 2026 will look “slower” right up until everyone else is busy explaining their numbers.