If your agent demo looks impressive but can’t survive contact with Salesforce, rate limits, and compliance, the constraint isn’t “prompt quality.” It’s the runtime.

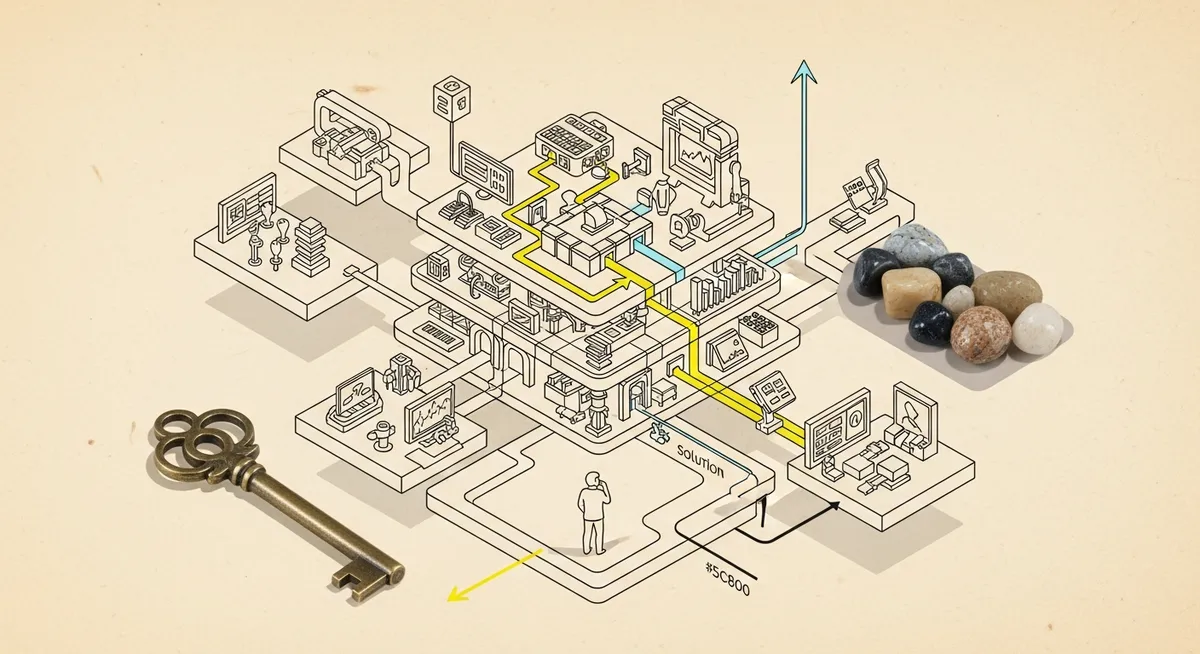

That’s the real shift behind Perplexity’s Agent API release on March 11, 2026: it frames agentic work as an execution problem, not a chat problem. A managed runtime for agentic workflows—one integration point that wraps search, tool execution, and multi-model orchestration—aims to replace the usual pile of services (router, search layer, embeddings, sandbox, monitoring) that quietly turns “we built an agent” into “we built a distributed system.”

And distributed systems fail in familiar ways. They get expensive. They degrade. They break at the seams where data freshness and permissions live.

If you only change one thing, change this: stop evaluating agents as model outputs and start evaluating them as workflows with unit economics, guardrails, and an audit trail.

Why this matters now: the market moved from “build an agent” to “run an agent”

There’s a telling data point in the research brief: 58.5% of agentic AI implementations used ready-to-deploy agents (Query 1 results). That’s not a vibe shift. It’s an operational one.

When teams stop hand-building everything, the bottleneck moves. It’s no longer “can we get a model to reason?” It becomes: can we run this thing inside real systems, with bounded actions, observability, and security during execution?

That emphasis shows up in 2026 developments. Zenity announced general availability of inline runtime security for AI agents on Microsoft Foundry on March 17, 2026, focused on real-time protection against data leakage and tool misuse (Query 3 results). Microsoft followed on April 8, 2026, with an open-source Agent Governance Toolkit to monitor and control agents in production, targeting OWASP risks during execution (Query 3 results). Different vendors, same signal: runtime is now the risk surface.

But a managed runtime comes with its own catch: reliability is still a constraint. The brief cites a Salesforce Commerce Cloud managed runtime degradation incident in October 2024 as a reminder that “managed” doesn’t mean “immune to outages” (Query 3 results). So the runtime conversation isn’t theoretical. It’s an uptime and trust conversation.

What an Agent API actually changes: fewer moving parts, clearer lanes, tighter control

Perplexity’s framing is useful because it forces a mental model. A traditional CPU loop is deterministic (fetch, decode, execute, store). An Agent API loop is different: the model receives an objective, decomposes it into steps, selects tools, executes, observes results, evaluates success, then iterates. Same concept—execution loop—different machinery.
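To make that loop concrete, here is a minimal Python sketch of the plan, execute, observe, evaluate cycle. It is not Perplexity's implementation or API: `run_agent_loop`, `plan_next_step`, `is_done`, and the tool registry are hypothetical names, and the planner is a stub where a model call would normally sit.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class AgentRun:
    objective: str
    observations: List[str] = field(default_factory=list)
    done: bool = False


def run_agent_loop(
    objective: str,
    plan_next_step: Callable[[AgentRun], Tuple[str, str]],  # hypothetical planner (model call in practice)
    tools: Dict[str, Callable[[str], str]],                 # bounded tool registry
    is_done: Callable[[AgentRun], bool],                    # hypothetical success check
    max_iterations: int = 5,                                # hard cap so the loop always terminates
) -> AgentRun:
    run = AgentRun(objective)
    for _ in range(max_iterations):
        tool_name, argument = plan_next_step(run)  # decompose and select a tool
        if tool_name not in tools:
            # Unknown tools are rejected and recorded, never executed.
            run.observations.append(f"rejected unknown tool: {tool_name}")
            continue
        result = tools[tool_name](argument)        # execute
        run.observations.append(result)            # observe
        if is_done(run):                           # evaluate
            run.done = True
            break
    return run


# Stubbed usage: one lookup tool, success after two observations.
example = run_agent_loop(
    "prep for sales call",
    plan_next_step=lambda run: ("lookup", run.objective),
    tools={"lookup": lambda query: f"result for {query}"},
    is_done=lambda run: len(run.observations) >= 2,
)
```

The iteration cap and the tool registry are the two places where a non-deterministic loop becomes bounded: the model can ask for anything, but only registered tools run, and only a fixed number of times.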

Perplexity’s Agent API positions itself as an orchestrator for the full loop: retrieval, tool execution, reasoning, and multi-model fallback under a single endpoint, account, and API key. The practical appeal is simple. Fewer integration points means fewer places to lose state, permissions, logging, or cost controls.

It also bakes in tools that map to real enterprise needs: web_search with domain filtering (an allowlist or denylist of up to 20 domains), recency and date range filters, language filtering, and configurable content budgets; fetch_url to retrieve full page content; and custom functions that connect to internal APIs and databases. Those are execution primitives, not chat features.
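If you want to reason about domain filtering before you wire anything up, a local pre-flight check is enough. The sketch below is an assumption-heavy illustration, not the Agent API's request schema: `validate_domain_filter` and `url_allowed` are hypothetical helpers, and the only detail taken from the text above is the 20-domain limit.

```python
from typing import Iterable, List
from urllib.parse import urlparse

MAX_DOMAINS = 20  # the per-filter limit described above


def validate_domain_filter(domains: Iterable[str]) -> List[str]:
    """Normalize a domain list and reject it if it exceeds the limit."""
    cleaned = sorted({d.strip().lower() for d in domains if d.strip()})
    if len(cleaned) > MAX_DOMAINS:
        raise ValueError(f"{len(cleaned)} domains given, limit is {MAX_DOMAINS}")
    return cleaned


def url_allowed(url: str, allowlist: Iterable[str]) -> bool:
    """True if the URL's host is an allowlisted domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in allowlist)


allowlist = validate_domain_filter(["Example.com", "docs.python.org"])
```

Matching on the exact host or a dot-prefixed suffix is deliberate. A plain substring check would let `evil-example.com` pass an `example.com` allowlist.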

Seen from the other side, this is also a governance move. InfoWorld’s “Agent Tier” idea—separating deterministic lanes (rule enforcement/state changes) from adaptive reasoning—gets at why enterprises keep stalling after prototypes (Query 3 results). You want the model to propose. You don’t want the model to directly mutate critical systems without bounded actions and logging. A managed runtime can make that separation easier to implement consistently.

The ONE move to run this week: an “Agent Tier” holdout test for a single workflow

DemGenDaily readers don’t need another agent concept piece. They need a controlled way to answer one question: does an Agent API-managed runtime improve workflow outcomes in production conditions, without blowing up risk?

Primary tactic: run a two-lane experiment (deterministic lane vs agent lane) on one workflow that touches real systems, with a holdout and a stop-loss.

Hypothesis (make it falsifiable)

If we implement an Agent API-managed runtime for one high-friction RevOps workflow (agent lane) while keeping a deterministic, rule-based workflow as the control (deterministic lane), then cycle time per completed task will decrease and task success rate will increase because the runtime reduces tool-call overhead and enforces bounded actions with better observability.

Pick the workflow (keep it boring)

Choose something with clear start/end states and measurable outcomes. Examples that usually qualify:

- Inbound lead enrichment + routing decision + CRM update

- “Prep for sales call” research packet that pulls CRM context plus recent external context (Perplexity’s example pattern)

- Support ticket triage that classifies, drafts, and files the right internal fields (drafting is fine; auto-closing is usually not)

Setup / Owners / Tools

- Owner: Demand Gen Ops or RevOps (execution) + Security/IT (permissions review) + one Sales Ops stakeholder (definition of “done”)

- Tools: Agent API runtime (for the agent lane), existing workflow tooling (for control), logging/monitoring you already trust (don’t add three new dashboards)

- Audience: one segment only (e.g., inbound leads from a single product line, or AE team pod A)

- Timeline: 5 business days to implement + 10 business days to collect directional data

Launch: design the two lanes

- Deterministic lane (control): rules + fixed API calls + strict schema validation; no adaptive reasoning.

- Agent lane (treatment): objective-based agent loop with bounded tools. Use domain filtering and content budgets for any web retrieval to reduce “search sprawl.”

- Holdout: keep 10–20% of volume in the control lane no matter what. This is non-negotiable if the goal is incrementality, not vibes.
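One way to keep the holdout honest is to assign lanes by hashing a stable task ID, so the same lead or ticket always lands in the same lane and nobody can hand-pick the split. This is a sketch under assumptions: `assign_lane` and the 20% default are illustrative, and any stable identifier from your own system would work as `task_id`.

```python
import hashlib


def assign_lane(task_id: str, holdout_fraction: float = 0.2) -> str:
    """Deterministically map a task to 'control' or 'agent'.

    holdout_fraction is the share of volume pinned to the control lane
    (0.2 here, the top of the 10-20% range suggested above).
    """
    if not 0.0 < holdout_fraction < 1.0:
        raise ValueError("holdout_fraction must be between 0 and 1")
    digest = hashlib.sha256(task_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "control" if bucket < holdout_fraction else "agent"


lanes = [assign_lane(f"lead-{i}") for i in range(10_000)]
control_share = lanes.count("control") / len(lanes)
```

Because the assignment depends only on the ID, reruns and retries do not leak tasks across lanes, which is what makes the comparison between lanes usable later.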

One more constraint: don’t let the agent directly write to your source of truth without a deterministic check. That’s the whole “Agent Tier” point—adaptive reasoning proposes; deterministic controls enforce.
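In code, "proposes versus enforces" can be as small as a validation function sitting between the agent's output and the write. The sketch below assumes a made-up CRM schema: `ALLOWED_FIELDS`, `ALLOWED_STATUS`, and both function names are hypothetical, and the write itself is simulated with a dict merge rather than a real CRM call.

```python
from typing import Dict, List

# Hypothetical schema: the only fields and values the agent may propose.
ALLOWED_FIELDS = {"lead_status", "owner_pod", "routing_reason"}
ALLOWED_STATUS = {"new", "working", "qualified"}


def check_proposal(proposal: Dict[str, str]) -> List[str]:
    """Deterministic rules only: no model involved in this step."""
    errors = []
    unknown = set(proposal) - ALLOWED_FIELDS
    if unknown:
        errors.append(f"fields not writable: {sorted(unknown)}")
    status = proposal.get("lead_status")
    if status is not None and status not in ALLOWED_STATUS:
        errors.append(f"invalid lead_status: {status}")
    return errors


def apply_if_valid(record: Dict[str, str], proposal: Dict[str, str],
                   audit_log: List[dict]) -> Dict[str, str]:
    """Log every proposal, then return the updated record only if it passes."""
    errors = check_proposal(proposal)
    audit_log.append({"proposal": proposal, "errors": errors})
    if errors:
        return record  # rejected: source of truth is unchanged
    return {**record, **proposal}


log: List[dict] = []
record = {"lead_status": "new", "owner_pod": "A"}
accepted = apply_if_valid(record, {"lead_status": "qualified"}, log)
rejected = apply_if_valid(record, {"account_owner": "me"}, log)
```

Rejected proposals still land in the audit log. That is the part you will want on day three, when someone asks why the agent lane's output looks different from control.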

What to measure (and what not to over-interpret)

Success = cycle time per completed task (median minutes from trigger to “done”).

Secondary metrics = task success rate (did it complete without human rework?) and cost per completed task (directional, not definitive—token dashboards alone won’t capture downstream rework).

Guardrails = error rate (tool failures, permission errors), and “data freshness incidents” (cases where the agent used outdated context). The research brief explicitly warns that without robust APIs, agents can deliver outdated info and fail in production (Query 2 results).
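These definitions are easy to blur in a dashboard, so it helps to pin them down once. The sketch below is one possible reading, with assumptions stated: the `TaskRun` fields are hypothetical log columns, "success" means completed without rework as a share of all runs in the lane, and cost is total lane spend divided by completed tasks.

```python
from dataclasses import dataclass
from statistics import median
from typing import List, Optional


@dataclass
class TaskRun:
    lane: str                         # "control" or "agent"
    minutes_to_done: Optional[float]  # None if the task never reached "done"
    needed_rework: bool               # a human had to fix the result
    cost_usd: float                   # spend attributed to this run
    tool_errors: int                  # tool failures plus permission errors


def summarize(runs: List[TaskRun], lane: str) -> dict:
    lane_runs = [r for r in runs if r.lane == lane]
    completed = [r for r in lane_runs if r.minutes_to_done is not None]
    clean = [r for r in completed if not r.needed_rework]
    total_cost = sum(r.cost_usd for r in lane_runs)
    return {
        "median_cycle_minutes": median(r.minutes_to_done for r in completed) if completed else None,
        "success_rate": len(clean) / len(lane_runs) if lane_runs else None,
        "cost_per_completed_task": total_cost / len(completed) if completed else None,
        "error_rate": sum(r.tool_errors > 0 for r in lane_runs) / len(lane_runs) if lane_runs else None,
    }
```

Dividing cost by completed tasks, not by runs, is the choice that keeps failed runs from looking cheap. Data freshness incidents are left out here because they usually need a human label rather than a log field.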

Stop-loss threshold = if rework rate increases by >10% versus control for two consecutive days, revert the segment to deterministic-only while you inspect logs and tool calls.
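A stop-loss only works if it is mechanical. Here is a minimal sketch of the check, with one assumption flagged loudly: it reads ">10%" as a relative increase over control's rework rate. If your team means 10 percentage points, change the comparison. `stop_loss_triggered` and its parameters are hypothetical names.

```python
from typing import Sequence


def stop_loss_triggered(
    control_rework: Sequence[float],  # daily rework rate in the control lane, e.g. 0.10
    agent_rework: Sequence[float],    # daily rework rate in the agent lane, same days
    threshold: float = 0.10,          # ASSUMPTION: relative increase, not percentage points
    consecutive_days: int = 2,
) -> bool:
    """True once the agent lane breaches the threshold on consecutive days."""
    streak = 0
    for control, agent in zip(control_rework, agent_rework):
        if agent > control * (1 + threshold):
            streak += 1
        else:
            streak = 0  # a single clean day resets the count
        if streak >= consecutive_days:
            return True
    return False
```

Run it once a day against the lane summaries. When it returns `True`, route the segment back to the deterministic lane first and read the logs second.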

Readout: what results should look like if this is working

You’re looking for a pattern that matches what runtime optimization research suggests: fewer calls and higher success. The brief cites Agent Workflow Optimization (AWO) results of up to 11.9% reduction in LLM calls and up to +4.2 percentage points in task success rates across benchmarks (Query 1 results). Those are not guaranteed outcomes for your workflow. But they’re a reasonable directional bar for “is this worth continuing?”
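When you compare your numbers to that bar, keep the units straight: the call reduction is a relative percentage, while the success change is in percentage points. A small sketch, assuming you have a baseline and a candidate agent configuration to compare (the function and parameter names are hypothetical):

```python
from typing import Tuple


def readout(
    baseline_calls: int,
    candidate_calls: int,
    baseline_success: float,   # success rate as a fraction, e.g. 0.80
    candidate_success: float,
) -> Tuple[float, float]:
    """Return (relative reduction in LLM calls, in percent;
    change in success rate, in percentage points)."""
    if baseline_calls <= 0:
        raise ValueError("baseline_calls must be positive")
    call_reduction_pct = (baseline_calls - candidate_calls) / baseline_calls * 100
    success_delta_pp = (candidate_success - baseline_success) * 100
    return call_reduction_pct, success_delta_pp


# Illustrative inputs only, chosen to land near the cited upper bounds.
reduction, delta = readout(1000, 881, 0.80, 0.842)
```

A success rate that moves from 80% to 84.2% is up 4.2 points, or 5.25% in relative terms. Pick one convention for the readout and label it.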

Also watch for the less glamorous win: fewer brittle integrations. Practitioners warn that sales automation fails when CRM integrations are laggy, rate-limited, or fragile—and that failure erodes trust and burns developer time (Query 2 results). If the managed runtime reduces the number of components you maintain, the reliability payoff may show up as fewer incidents, not prettier completions.

Trade-off (say it out loud)

This test will likely reduce volume before it improves quality. You’ll add checks, logging, and bounded actions. Some runs will fail fast instead of “kind of working.” That’s good. It makes failure visible.

When this is wrong: if the workflow is already deterministic and stable (few branches, low ambiguity), an agent lane may add cost without improving cycle time. Use the agent where judgment and multi-step retrieval actually matter.

The kicker: “managed runtime” doesn’t remove risk—it moves it into the open

The promise of an Agent API-managed runtime isn’t magic automation. It’s something more useful: fewer hidden seams, clearer control points, and a workflow you can measure like any other GTM system.

In 2026, that’s the adult conversation. Security vendors are building inline protection. Microsoft is open-sourcing governance tooling. Architects are arguing for deterministic lanes around adaptive reasoning. And the uncomfortable truth remains: reliability incidents still happen, even in managed environments (Query 3 results).

So the job isn’t to “adopt agents.” It’s to decide—workflow by workflow—where an agent belongs, what it’s allowed to touch, and how quickly you’ll know it’s failing. That’s what a runtime decision looks like when pipeline is on the line.