In 2026, a weird thing is happening to “search success.” Content can influence a buying decision and never earn a visit. Not once.

The numbers explain why teams are rethinking the whole job description. Roughly 60% of Google searches end without a click (SparkToro/Datos, cited in “Answer Engine Optimization Statistics 2026”). And when AI summaries show up, they can lower clicks by 35% (same source). Meanwhile, AI Overviews are now appearing in nearly half of all searches (AEO Statistics 2026). That combination turns classic SEO reporting into a comforting fiction: rankings rise, impressions climb, and pipeline still feels softer than it should.

But the shift isn’t just “Google is changing.” People are changing. The research brief reports that 58% of users have replaced traditional search engines with AI-driven tools for product and service discovery, and that 64% of customers are ready to purchase products suggested by AI (AEO Statistics 2026). This is what Answer Engine Optimization (AEO) is responding to: the moment where the answer becomes the interface, and the interface becomes the decision-maker.

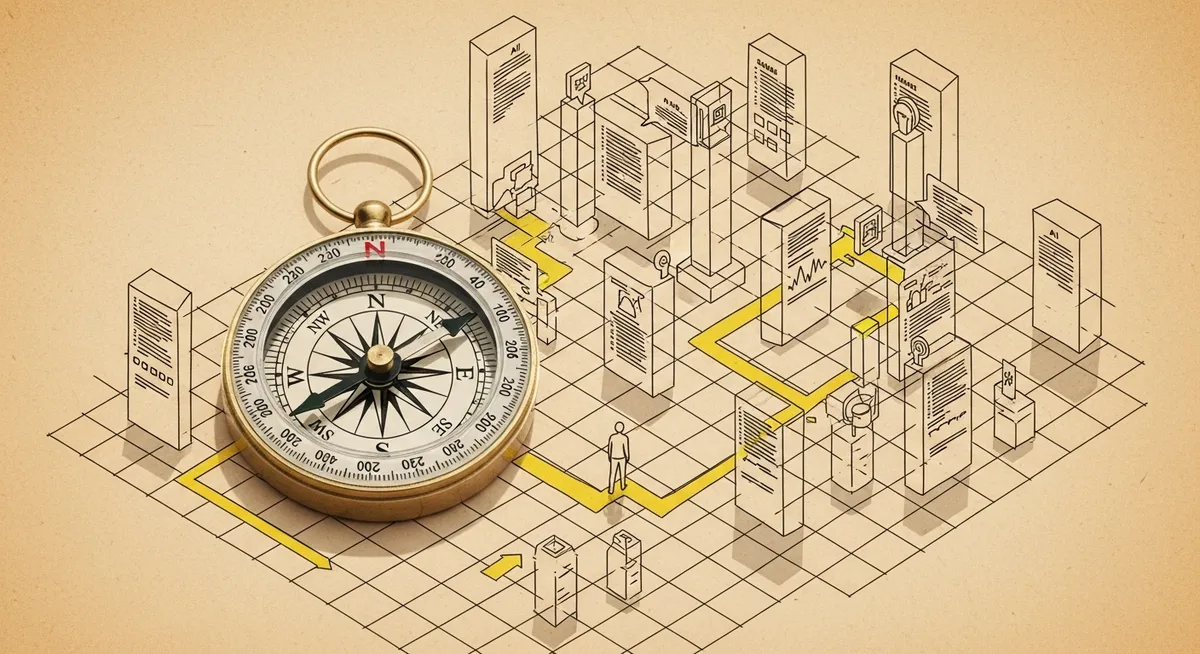

Here’s the real question that keeps demand gen leaders up at night: if discovery is happening inside ChatGPT, Google AI Overviews, Perplexity, or Gemini, how does a B2B team get “picked” as a source before the buyer ever reaches a comparison page?

The short version: structure content so AI systems can extract it, trust it, and cite it. The longer version gets more interesting.

The new SERP is an answer box that follows your buyer everywhere

AEO in 2026 isn’t a rebrand of SEO. It’s an adaptation to AI-driven search systems that synthesize responses directly in the interface, often with citations and often with no downstream click (Latest news developments in Answer Engine Optimization 2026). That’s why measurement is drifting from sessions toward visibility inside answers: citations, inclusion rates, and share of voice across answer engines (Latest developments, 2026).

And yes, that can feel like surrender. No click, no cookie, no retargeting pool. But the data also suggests a more nuanced reality: 63% of websites report traffic coming from AI search (AEO Statistics 2026). Some queries still send visits. Some don’t. The mistake is treating all queries as if they behave the same way.

There’s another way to read the situation: the “top of funnel” has split into two lanes. One lane is still traditional—ranking pages, capturing visits, converting on-site. The other lane is invisible in GA4 unless a team goes looking—brand mentions and citations inside AI answers that shape shortlists upstream of the click.

So the practical goal changes. Not “rank for the keyword.” Not even “get the click.” In many categories, it becomes: be the source the model cites when it writes the answer.

What answer engines reward: extractability, clarity, and entity strength

Most AEO advice converges on one unglamorous truth: AI systems favor content that’s easy to lift cleanly. Expert commentary summarized in the research brief emphasizes answer-first structure (put the core answer near the top), clear headings, and structured formats like lists, comparisons, and step-by-step instructions (Expert opinions on AEO trends 2026). Long narrative paragraphs tend to underperform because they’re hard to quote without losing meaning.

That creates a tension for content teams. The web has spent a decade rewarding “comprehensive guides.” Answer engines reward something closer to technical writing: precise, modular, and unambiguous. Not cold. Just clean.

Start with the simplest retrofit that works on existing pages: open each key section with a direct, self-contained answer. Then support it with context. A human can skim. A model can extract. Both win.
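One way to find pages that need this retrofit is a quick audit of section openings. The sketch below is a heuristic, not a quality test: it assumes pages are stored as Markdown with `#` headings and blank-line paragraph breaks, and it flags any opening paragraph longer than a hypothetical `MAX_OPENING_WORDS` threshold as a candidate for rewriting into a direct answer.

```python
# Hypothetical threshold: opening paragraphs longer than this are flagged
# as unlikely to be a crisp, liftable answer. Tune it per site.
MAX_OPENING_WORDS = 60


def opening_paragraphs(page_text: str) -> dict[str, str]:
    """Map each '#' heading to the first paragraph beneath it.

    Assumes Markdown-style pages: headings start with '#', and
    paragraphs are separated by blank lines.
    """
    sections: dict[str, str] = {}
    current = None
    buffer: list[str] = []

    def flush() -> None:
        # Keep only the first non-empty paragraph under each heading.
        if current is not None and current not in sections and buffer:
            sections[current] = " ".join(buffer)

    for line in page_text.splitlines():
        stripped = line.strip()
        if stripped.startswith("#"):
            flush()
            current, buffer = stripped.lstrip("#").strip(), []
        elif stripped:
            buffer.append(stripped)
        else:
            flush()
            buffer = []
    flush()
    return sections


def flag_long_openings(page_text: str, max_words: int = MAX_OPENING_WORDS) -> list[str]:
    """Return headings whose opening paragraph exceeds max_words."""
    return [
        heading
        for heading, paragraph in opening_paragraphs(page_text).items()
        if len(paragraph.split()) > max_words
    ]
```

A flagged section still needs a human read: a short opening can be vague, and a longer one can be perfectly quotable.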

But structure is only half the story. The bigger shift in 2026 is moving from keywords to entities and context. The research brief describes the emphasis as “entity clarity and semantic meaning,” supported by consistent brand terminology and internal linking (Expert opinions on AEO trends 2026). In plain language: if a site uses three different names for the same concept, AI systems have to guess what’s meant. Guessing is where brands get misrepresented—or omitted.
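A terminology audit can surface this before an answer engine has to guess. This is a minimal sketch under stated assumptions: `ALIASES` is a hypothetical map from one canonical name to the variants a team has seen creep in, pages are plain text keyed by URL, and matching is a case-insensitive whole-phrase regex.

```python
import re
from collections import Counter

# Hypothetical alias map: one canonical name per concept, plus variants
# that have crept into older pages. Replace with your own terminology.
ALIASES = {
    "revenue intelligence": ["revenue analytics", "sales intelligence"],
}


def variant_usage(pages: dict[str, str]) -> dict[str, Counter]:
    """Count case-insensitive whole-phrase matches of every name per concept."""
    usage: dict[str, Counter] = {}
    for canonical, variants in ALIASES.items():
        counts: Counter = Counter()
        for name in [canonical, *variants]:
            pattern = re.compile(r"\b" + re.escape(name) + r"\b", re.IGNORECASE)
            for text in pages.values():
                counts[name] += len(pattern.findall(text))
        usage[canonical] = counts
    return usage


def inconsistent_concepts(pages: dict[str, str]) -> list[str]:
    """Return concepts referred to by more than one name across the pages."""
    return [
        concept
        for concept, counts in variant_usage(pages).items()
        if sum(1 for n in counts.values() if n > 0) > 1
    ]
```

Any concept returned by `inconsistent_concepts` is a candidate for consolidating copy and internal-link anchor text on the canonical name.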

To understand why, it helps to go back to how these systems assemble answers. The uploaded document notes that many platforms rely on retrieval-plus-generation approaches (often described as retrieval-augmented generation), pulling from sources and then synthesizing. Different engines pull from different places. For example, the document reports that Google AI Overviews draw 77% of citations from the top 10 organic results, ChatGPT shows an 87% match rate with Bing’s top 20 results, and Perplexity has 60% of citations matching Google’s top 10 (uploaded document summary). Translation: classic SEO still matters, but now it’s feeding a second system that writes the “front page” for the user.
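Overlap figures like these can be approximated for a team’s own priority queries. The sketch below assumes the URLs an answer engine cited and the ranked organic results have already been collected by some other means; it normalizes URLs crudely and computes the share of citations that also appear in the top `top_n` organic results. The published studies may define a “match” differently, so treat the output as a directional signal.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Reduce a URL to host + path so trivial differences don't block a match.

    Simplification: lowercases the whole URL, drops a leading 'www.' and a
    trailing slash, and ignores query strings and fragments.
    """
    parts = urlsplit(url.strip().lower())
    host = parts.netloc.removeprefix("www.")
    return host + parts.path.rstrip("/")


def citation_match_rate(cited: list[str], organic: list[str], top_n: int = 10) -> float:
    """Share of cited URLs that also appear in the top_n organic results."""
    if not cited:
        return 0.0
    top = {normalize(u) for u in organic[:top_n]}
    hits = sum(1 for u in cited if normalize(u) in top)
    return hits / len(cited)
```

Running it per engine, with `top_n=10` for a Google comparison or `top_n=20` for a Bing comparison, mirrors the shape of the figures above without claiming to reproduce them.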

Multi-modal AEO and the end of “just publish the blog post”

In 2026, text-only pages are starting to look like single-sensor instruments. Expert guidance increasingly recommends multi-modal optimization—images, tables, charts, video, audio—because AI engines analyze structured elements and visuals to reduce ambiguity and assemble better answers (Expert opinions on AEO trends 2026).

This isn’t about decorating a post with stock imagery. It’s about giving the model stable, parseable artifacts. A simple comparison table can do more for citation likelihood than three extra paragraphs, because it turns interpretation into extraction. A labeled diagram can reduce category confusion. A short clip can become the “canonical” explanation when the engine decides what to surface.

But this is where teams often stumble: multi-modal content multiplies production overhead unless it’s operationalized. The better approach is to build a repeatable package. One page template. One table pattern. One chart style. One naming convention. Consistency is a ranking factor for humans too—just in a different way.
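The “one table pattern” idea can be as small as a single rendering function that every page reuses. In this sketch the column list and the `MISSING` label are hypothetical house conventions and the output is a Markdown table; a CMS template would serve the same purpose.

```python
# Hypothetical house pattern: every comparison table uses the same column
# order and the same label for missing values.
COLUMNS = ["Option", "Best for", "Pricing model", "Setup time"]
MISSING = "Not stated"


def comparison_table(rows: list[dict[str, str]]) -> str:
    """Render rows as a Markdown table using the shared column pattern."""
    def cell(value: str) -> str:
        # Fill gaps with the house label and escape pipes so a value
        # can't break the table layout.
        return (value or MISSING).replace("|", "\\|")

    lines = [
        "| " + " | ".join(COLUMNS) + " |",
        "|" + "|".join(" --- " for _ in COLUMNS) + "|",
    ]
    for row in rows:
        lines.append("| " + " | ".join(cell(row.get(col, "")) for col in COLUMNS) + " |")
    return "\n".join(lines)
```

Because every table shares column order and gap labels, a reader (or a model) comparing two pages never has to reinterpret the layout.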

And because AEO crosses disciplines, it forces real collaboration: content, technical SEO, web development, and digital PR all touch the outcome (uploaded document summary). If that sounds like a governance problem, it is. AEO is becoming less of a content tactic and more of a revenue-architecture concern, which is exactly why it’s showing up at the executive level (uploaded document summary).

How to measure AEO without lying to yourself

Clicks won’t disappear, but they’re no longer a complete proxy for influence. The research brief flags the move toward citations, inclusion rates, and “share of voice” across AI engines (Latest developments in AEO 2026). That shift is partly defensive—publishers have reported organic traffic declines of 20–40%, with some exceeding 70% (AEO Statistics 2026). When the baseline is sliding, a flat traffic chart can hide real loss.

At the same time, measurement is getting more tool-driven. The brief notes emerging AEO tooling such as SE Visible and Writesonic for tracking citations and visibility, plus benchmarking tools like HubSpot’s AI Search Grader (Latest developments, 2026). The tools matter less than the discipline: define what counts as a “win” (citation, mention, inclusion), decide which engines matter for the category, and connect those signals to downstream conversions where possible.
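Once the “win” is defined, the core metrics reduce to simple ratios. This sketch assumes a team samples answers for a fixed set of tracked prompts on each engine and records which brands each answer cites. The `AnswerSample` record and any brand names are hypothetical, and the definitions are one reasonable choice: inclusion rate is the share of sampled answers that cite the brand, and share of voice is the brand’s fraction of all brand citations in those answers. The tools named above may compute these differently.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class AnswerSample:
    """One sampled answer for a tracked prompt (hypothetical record shape)."""
    engine: str               # e.g. "chatgpt" or "perplexity"; labels are yours
    prompt: str               # the tracked buyer question
    brands_cited: list[str]   # brands cited or mentioned in the answer


def aeo_metrics(samples: list[AnswerSample], brand: str) -> dict[str, dict[str, float]]:
    """Per engine, compute inclusion rate and share of voice for one brand.

    inclusion rate = answers that cite the brand / answers sampled
    share of voice = the brand's citations / all brand citations in those answers
    """
    answers: dict[str, int] = defaultdict(int)
    included: dict[str, int] = defaultdict(int)
    brand_cites: dict[str, int] = defaultdict(int)
    all_cites: dict[str, int] = defaultdict(int)
    for s in samples:
        answers[s.engine] += 1
        included[s.engine] += int(brand in s.brands_cited)
        brand_cites[s.engine] += s.brands_cited.count(brand)
        all_cites[s.engine] += len(s.brands_cited)
    return {
        engine: {
            "inclusion_rate": included[engine] / answers[engine],
            "share_of_voice": (
                brand_cites[engine] / all_cites[engine] if all_cites[engine] else 0.0
            ),
        }
        for engine in answers
    }
```

Keeping the prompt set fixed between runs matters more than the exact formula; otherwise a change in share of voice may only reflect a change in what was asked.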

One caution belongs on every dashboard: attribution will be messy. Citations and impressions can be harder to tie to revenue than clicks, and the brief explicitly calls out the need for governance before budgets get reallocated (Latest developments, 2026). That’s not a reason to ignore AEO. It’s a reason to set standards early—while competitors are still arguing about whether it “counts.”

The story started with a depressing stat: most searches don’t end in a click. But the more useful framing is this: the click used to be the moment of truth. In 2026, the moment of truth is earlier—when an answer engine decides which sources are safe to cite. If the buyer never lands on the site, the citation is the visit. The mention is the impression. And the absence is the loss.