Creative is supposed to be the big lever. Nielsen has reported that creative quality can drive 56% of a campaign’s sales ROI, and Google has cited creative as determining 70% of campaign success (Search Results: recent statistics on creative performance in marketing campaigns 2023 [2]). Those numbers are why teams reach for the creative brief the moment results wobble.

But there’s a contradiction hiding in plain sight: if creative matters that much, it’s also the easiest thing to scapegoat. It’s visible. It’s subjective. And it’s often the only part of the system that doesn’t require a meeting with RevOps to change.

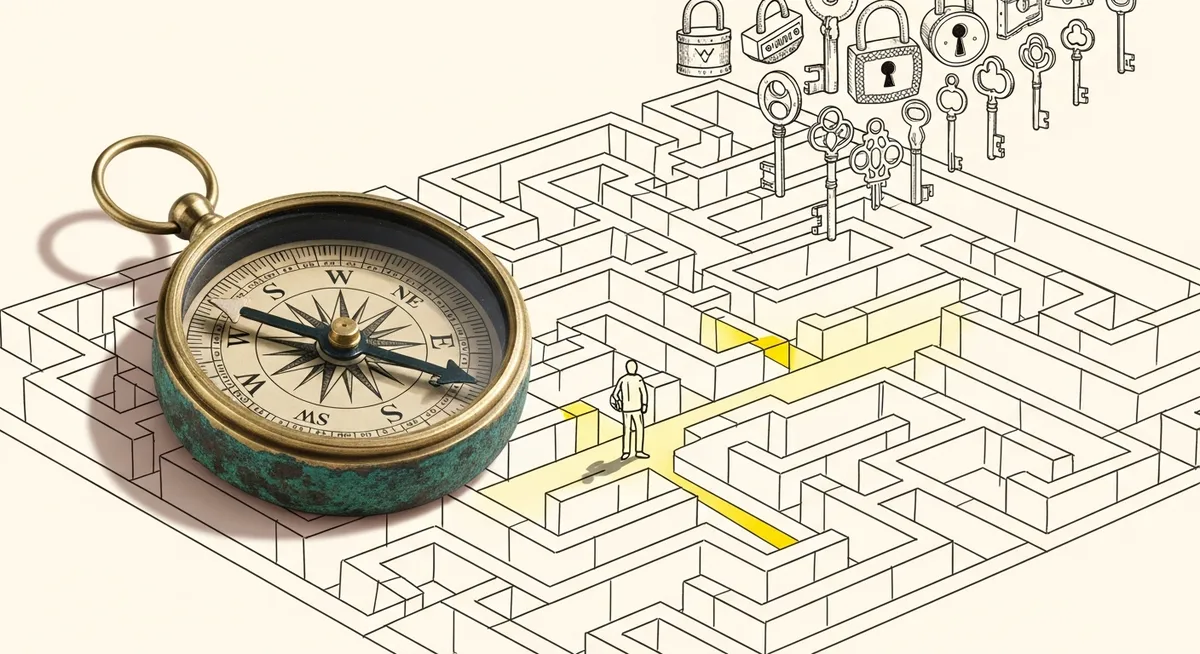

Gonçalo Prates, who shares conversion-focused campaign teardowns and frameworks on LinkedIn, put it plainly: before flooding a team with new creative requests, run a quick diagnostic. His three steps are simple on the surface. In practice, they’re a demand gen manager’s way of protecting time, budget, and internal trust.

Why this matters now: creative scrutiny is up, but waste is still everywhere

Industry attention has swung back toward creative. In 2023, creative effectiveness rebounded versus 2022, with reported lifts in brand appeal (+8%), emotional intensity (+7%), and purchase uplift (+5%) (Search Results: recent statistics on creative performance in marketing campaigns 2023 [1]). Marketers also reported increasing focus on creative quality (Search Results: recent statistics on creative performance in marketing campaigns 2023 [7]).

That rebound creates pressure. When a channel underperforms, the default move becomes “make new ads.” Yet expert commentary summarized in the research brief argues that underperformance is often misdiagnosed as a creative problem when the root causes are flawed assumptions, weak positioning, poor audience understanding, or measurement gaps (Search Results: expert opinions on diagnosing marketing campaign failures and creative effectiveness [1][2][3]). Some estimates even peg 20–30% of spend as lost when campaigns are built on flawed data or assumptions (Search Results: expert opinions… [1]). That’s not a design problem. That’s an ops problem.

So the goal isn’t to defend creative. It’s to figure out whether creative is actually the constraint before teams burn cycles “fixing” the wrong thing.

Step 1: Read leading indicators against your own baseline, not someone else’s benchmark

Prates starts where most teams already look: leading metrics like CTR and engagement rate. The twist is what he ignores. He advises against obsessing over industry benchmarks and instead comparing performance to your own historical baselines.

That’s more than a preference. It’s measurement hygiene. Benchmarks collapse context: audience temperature, channel mix, auction dynamics, brand awareness, offer strength. A clean baseline preserves it. It reflects the reality of your account, your targeting, your creative system, and your seasonality.

Then comes the diagnostic question that keeps teams honest: are those leading metrics improving after each round of creative iterations? If yes, Prates argues the problem likely isn’t the ads. If they’re flat or trending down, he names three plausible culprits: targeting (wrong people), messaging (angle doesn’t land), or creative (doesn’t grab attention).

But the most useful part of Step 1 is the branch it creates. Because if CTR and engagement are improving and conversions are still weak, the failure is happening after the click. That’s not a vibe. That’s a location.
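For teams that want to make that branch explicit, here is a minimal Python sketch of the Step 1 logic. It is an illustration, not Prates’ method: the function names, the 5% minimum lift, and the choice to compare only the latest creative round against the average of your own historical CTRs are all assumptions made for the example.

```python
from statistics import mean


def leading_metric_improving(baseline, rounds, min_lift=0.05):
    """Return True when the latest round beats your own historical average.

    baseline: CTRs (as fractions) from past campaigns in the same account.
    rounds:   CTRs from each creative iteration, oldest first.
    min_lift: hypothetical threshold; 0.05 means "at least 5% above baseline".
    """
    if not baseline or not rounds:
        raise ValueError("need at least one baseline value and one round")
    own_baseline = mean(baseline)
    return rounds[-1] >= own_baseline * (1 + min_lift)


def step_one_branch(baseline, rounds, conversions_weak):
    """Map Step 1 onto a location for the problem.

    Labels are hypothetical shorthand:
      "pre_click"              -> check targeting, messaging, or creative
      "post_click"             -> ads earn attention; move on to Step 2
      "ads_not_the_constraint" -> leading metrics and conversions both look fine
    """
    if not leading_metric_improving(baseline, rounds):
        return "pre_click"
    return "post_click" if conversions_weak else "ads_not_the_constraint"


if __name__ == "__main__":
    past_ctrs = [0.010, 0.012, 0.011]   # your account's history, not an industry benchmark
    new_rounds = [0.012, 0.013, 0.014]  # CTR after each creative iteration
    print(step_one_branch(past_ctrs, new_rounds, conversions_weak=True))
```

The point of the sketch is the shape of the decision, not the threshold. Any real version would need enough volume per round to trust the comparison.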

Step 2: Diagnose post-click behavior before rewriting another headline

Step 2 forces the conversation out of the ad account and onto the landing experience. Prates points to conversion rate and form start-to-completion rate as the core signals. If people click and then don’t convert, he lists three common causes: landing page friction, expectation mismatch, and an offer problem.

Landing page friction is the unglamorous stuff: form length, page speed, conflicting CTAs, weak value proposition. The key is that friction is testable. Prates suggests a practical validation: test a lead gen form native to the ad platform. If conversions jump, the landing page is the bottleneck, not the creative.
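The two Step 2 signals and the native-form comparison can be sketched in a few lines of Python. Everything named here is hypothetical: the `FunnelCounts` fields, the function names, and the 20% relative-lift threshold are assumptions for illustration, and the comparison ignores statistical significance, which a real test would need to account for.

```python
from dataclasses import dataclass


@dataclass
class FunnelCounts:
    """Raw counts for one variant (landing page or native lead gen form)."""
    clicks: int
    form_starts: int
    form_completions: int


def conversion_rate(f: FunnelCounts) -> float:
    """Completed forms per ad click; 0.0 when there were no clicks."""
    return f.form_completions / f.clicks if f.clicks else 0.0


def form_completion_rate(f: FunnelCounts) -> float:
    """Completed forms per started form; 0.0 when nobody started."""
    return f.form_completions / f.form_starts if f.form_starts else 0.0


def landing_page_is_bottleneck(landing: FunnelCounts,
                               native: FunnelCounts,
                               min_relative_lift: float = 0.2) -> bool:
    """True when the native form converts meaningfully better than the page.

    min_relative_lift is an assumed cutoff: 0.2 means the native form must
    convert at least 20% better, relative to the landing page.
    """
    lp, nf = conversion_rate(landing), conversion_rate(native)
    if lp == 0:
        return nf > 0
    return (nf - lp) / lp >= min_relative_lift


if __name__ == "__main__":
    landing = FunnelCounts(clicks=1000, form_starts=200, form_completions=20)
    native = FunnelCounts(clicks=1000, form_starts=300, form_completions=60)
    print(conversion_rate(landing), form_completion_rate(landing))
    print(landing_page_is_bottleneck(landing, native))
```

A low form completion rate alongside a healthy start rate points at the form itself. A low start rate points further up the page.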

Seen from the other side, Step 2 is also a cross-functional audit in miniature. The research brief notes that experts recommend diagnostics before changing creative, including cross-functional audits and controlled testing (Search Results: expert opinions… [1][3]). Post-click behavior is where marketing ops, web, analytics, and sales handoffs collide. That’s why it “silently bleeds budget,” as one commenter on Prates’ post, James Taylor, put it.

“This diagnostic framework is spot on, especially Step 2. In my experience, post-click behavior is where most campaigns silently bleed budget.” — James Taylor

Taylor adds a specific operational trap: lead response time. He claims data shows responding within 5 minutes makes a team 21x more likely to convert versus waiting 30 minutes, and suggests adding a “Step 4” to audit speed-to-lead. The exact statistic isn’t sourced in the provided research brief, but the underlying warning is still the right one for ops-minded teams: a campaign can look “broken” when the real failure is downstream execution.
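A speed-to-lead audit of the kind Taylor describes can start as a simple count. The sketch below assumes each lead is exported as a pair of timestamps, creation time and first response time (or `None` if nobody responded). The function name and the 5-minute default are illustrative choices that borrow the window from Taylor’s comment. They are not a validated standard.

```python
from datetime import datetime, timedelta


def share_responded_within(leads, threshold=timedelta(minutes=5)):
    """Fraction of leads whose first response came within the threshold.

    leads: list of (created_at, first_response_at) tuples; first_response_at
           is None when the lead never got a response (counted as a miss).
    """
    if not leads:
        return 0.0
    fast = sum(
        1
        for created_at, responded_at in leads
        if responded_at is not None and responded_at - created_at <= threshold
    )
    return fast / len(leads)


if __name__ == "__main__":
    t0 = datetime(2024, 1, 1, 9, 0)
    sample = [
        (t0, t0 + timedelta(minutes=3)),   # fast follow-up
        (t0, t0 + timedelta(minutes=30)),  # slow follow-up
        (t0, None),                        # never contacted
    ]
    print(share_responded_within(sample))
```

If that share is low, the campaign may be delivering leads that the handoff is losing.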

And that brings the diagnostic to its hardest step, because it challenges sunk costs.

Step 3: If the offer is weak, creative work just makes failure more efficient

Prates’ final step is blunt: look at the offer. The action you’re asking a prospect to take—book a demo, request an assessment, download a guide, attend a webinar—has to feel worth it to them, not to the team that built the asset.

He calls out the moment many teams get stuck: they’ve already spent time and money on the offer, so abandoning it feels irrational. So they optimize everything around it instead. New creatives. Landing page tweaks. Button color debates. Anything but questioning the offer itself.

One commenter, Riya Madaan, echoed that point: “It’s rarely the targeting or creatives, it’s just that the offer isn’t strong or doesn’t match where the buyer is. Asking for a demo too early is probably the biggest miss I see all the time.” That aligns with the research brief’s “clicks but no conversions” pattern, which experts connect to positioning and messaging problems—and the need to simplify differentiation into one or two sentences before iterating creative (Search Results: expert opinions… [2]).

Here’s the uncomfortable operational translation. If the offer doesn’t map to buyer intent, creative quality can still inflate the wrong outcome: more clicks into a dead end. That’s how teams end up “proving” creative is broken, when creative is doing exactly what it’s supposed to do—earning attention.

And the data doesn’t let anyone off the hook. If creative truly contributes 56% of sales ROI (Nielsen) and 70% of campaign success (Google) (Search Results: recent statistics… [2]), then creative deserves investment. Just not as a first reflex. The better order is diagnostic discipline first, creative iteration second.

That circles back to the opening contradiction. Creative is a huge lever, which is exactly why it’s worth confirming it’s the right one to pull.